On Extractive and Abstractive Neural Document Summarization with Transformer Language Models

On Extractive and Abstractive Neural Document Summarization with Transformer Language Models

Sandeep Subramanian Raymond Li Jonathan Pilault Christopher Pal

Abstract

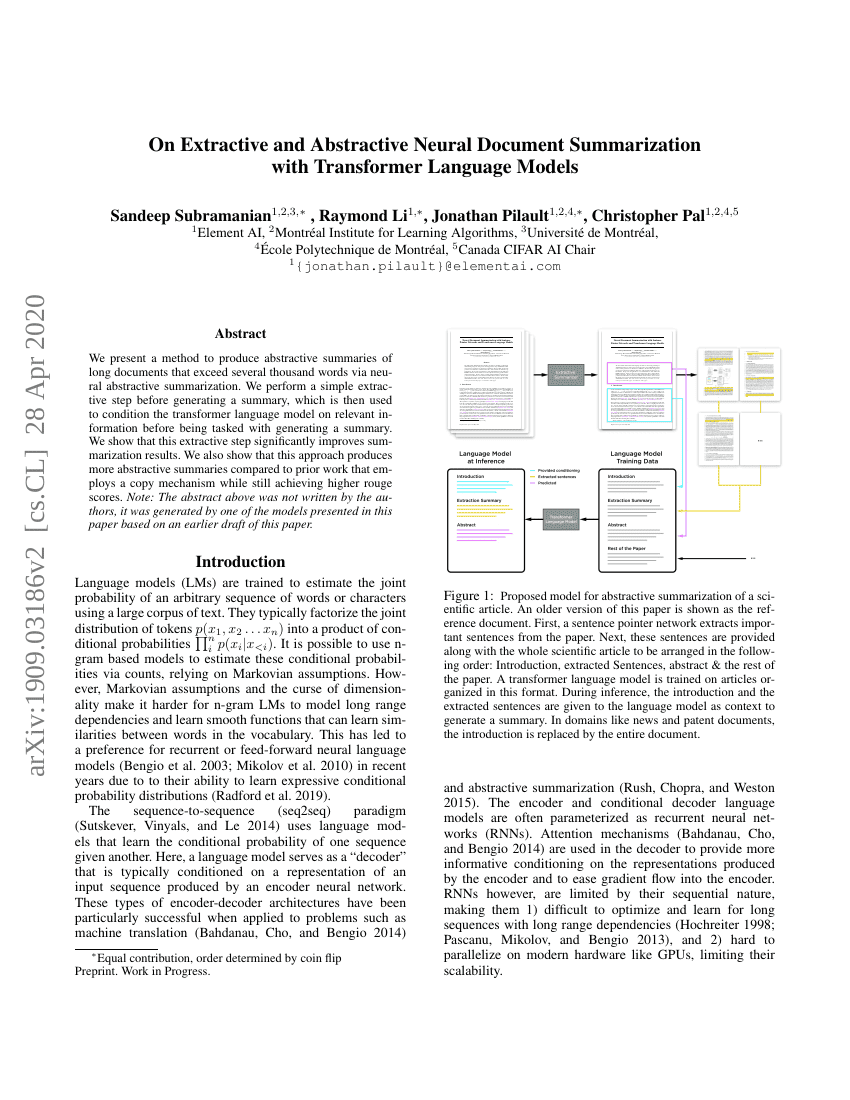

We present a method to produce abstractive summaries of long documents that exceed several thousand words via neural abstractive summarization. We perform a simple extractive step before generating a summary, which is then used to condition the transformer language model on relevant information before being tasked with generating a summary. We show that this extractive step significantly improves summarization results. We also show that this approach produces more abstractive summaries compared to prior work that employs a copy mechanism while still achieving higher rouge scores. Note: The abstract above was not written by the authors, it was generated by one of the models presented in this paper.

Code Repositories

Benchmarks

| Benchmark | Methodology | Metrics |

|---|---|---|

| text-summarization-on-arxiv | TLM-I+E | ROUGE-1: 42.43 |

| text-summarization-on-arxiv | Sent-CLF | ROUGE-1: 34.01 |

| text-summarization-on-arxiv | Sent-PTR | ROUGE-1: 42.32 |

| text-summarization-on-pubmed-1 | Sent-CLF | ROUGE-1: 45.01 |

| text-summarization-on-pubmed-1 | Sent-PTR | ROUGE-1: 43.3 |

| text-summarization-on-pubmed-1 | TLM-I+E | ROUGE-1: 41.43 |

Build AI with AI

From idea to launch — accelerate your AI development with free AI co-coding, out-of-the-box environment and best price of GPUs.