Kevin Lin, Lijuan Wang, Zicheng Liu

Abstract

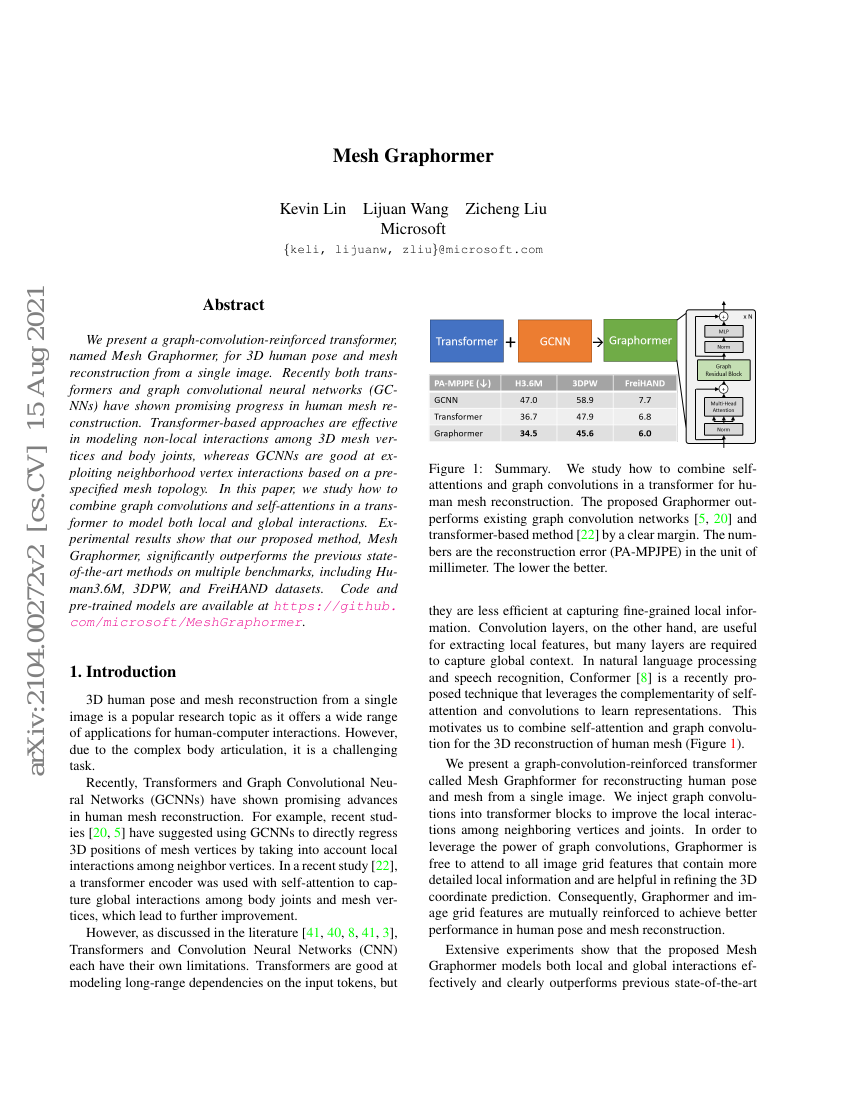

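We present Mesh Graphormer, a graph-convolution-reinforced transformer for 3D human pose and mesh reconstruction from a single image. Recently, both transformers and graph convolutional neural networks (GCNNs) have shown promising progress in human mesh reconstruction. Transformer-based approaches are effective at modeling non-local interactions among 3D mesh vertices and body joints, whereas GCNNs are good at exploiting a pre-specified mesh topology to capture local interactions between neighboring vertices. This paper studies how to combine graph convolutions and self-attention in a transformer architecture so that both local and global interactions are modeled. Experimental results show that the proposed method, Mesh Graphormer, significantly outperforms previous state-of-the-art methods on multiple benchmarks, including Human3.6M, 3DPW, and FreiHAND. Code and pre-trained models are available at https://github.com/microsoft/MeshGraphormer.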
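
To make the local-versus-global distinction concrete, here is a minimal NumPy sketch of a block that applies self-attention (every vertex attends to every other vertex) followed by a graph convolution (each vertex mixes features only with its mesh neighbors). It is an illustration under stated assumptions, not the authors' implementation: the function names, the single attention head, the placement of the graph convolution after attention, the symmetric adjacency normalization, and the 4-vertex chain "mesh" are all hypothetical choices, and layer normalization and the feed-forward sublayer are omitted. The reference implementation is in the repository linked above.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head self-attention: global interactions among all vertices."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores, axis=-1) @ v

def normalized_adjacency(edges, n):
    """D^{-1/2} (A + I) D^{-1/2} for an undirected graph with n vertices."""
    a = np.eye(n)
    for i, j in edges:
        a[i, j] = a[j, i] = 1.0
    d_inv_sqrt = 1.0 / np.sqrt(a.sum(axis=1))
    return a * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def graph_conv(x, a_hat, w_g):
    """Graph convolution with ReLU: local interactions along mesh edges only."""
    return np.maximum(a_hat @ x @ w_g, 0.0)

def hybrid_block(x, a_hat, params):
    """Assumed arrangement: residual self-attention, then residual graph conv."""
    x = x + self_attention(x, params["w_q"], params["w_k"], params["w_v"])
    x = x + graph_conv(x, a_hat, params["w_g"])
    return x

# Toy example: 4 "vertices" connected in a chain 0-1-2-3, with 8-dim features.
rng = np.random.default_rng(0)
n, dim = 4, 8
x = rng.normal(size=(n, dim))
a_hat = normalized_adjacency([(0, 1), (1, 2), (2, 3)], n)
params = {name: rng.normal(scale=0.1, size=(dim, dim))
          for name in ("w_q", "w_k", "w_v", "w_g")}
out = hybrid_block(x, a_hat, params)
print(out.shape)  # (4, 8)
```

In this toy setup, changing the features of vertex 3 leaves the graph-convolution output at vertex 0 untouched, because the two vertices are not neighbors, while the self-attention output at vertex 0 does change. That is the complementary behavior the abstract describes.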

Benchmarks

| Benchmark | Method | Metrics |
|---|---|---|
| 3d-hand-pose-estimation-on-freihand | MeshGraphormer | PA-F@15mm: 0.986; PA-F@5mm: 0.764; PA-MPJPE: 5.9; PA-MPVPE: 6.0 |
| 3d-hand-pose-estimation-on-hint-hand | MeshGraphormer | PCK@0.05 (Ego4D) All: 14.6; PCK@0.05 (Ego4D) Occ: 8.3; PCK@0.05 (Ego4D) Visible: 18.4; PCK@0.05 (NewDays) All: 16.8; PCK@0.05 (NewDays) Occ: 7.9; PCK@0.05 (NewDays) Visible: 22.3; PCK@0.05 (VISOR) All: 19.1; PCK@0.05 (VISOR) Occ: 10.9; PCK@0.05 (VISOR) Visible: 23.6 |
| 3d-human-pose-estimation-on-3dpw | Mesh Graphormer | MPJPE: 74.7; MPVPE: 87.7; PA-MPJPE: 45.6 |
| 3d-human-pose-estimation-on-human36m | Mesh Graphormer | Average MPJPE (mm): 51.2; Multi-View or Monocular: Monocular; PA-MPJPE: 34.5 |
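
For readers unfamiliar with the error metrics in the table, the sketch below shows how MPJPE and PA-MPJPE are commonly computed. MPJPE is the mean Euclidean distance between predicted and ground-truth joints. PA-MPJPE is the same error after the prediction has been aligned to the ground truth with a similarity transform (Procrustes alignment). MPVPE applies the same formula to mesh vertices instead of joints. This is a generic sketch, not the evaluation code behind the numbers above: the function names and the synthetic 14-joint data are assumptions, and benchmark-specific steps such as root alignment and joint-set selection are omitted. Results are in the units of the inputs (the benchmarks report millimeters).

```python
import numpy as np

def mpjpe(pred, gt):
    """Mean per-joint position error for (num_joints, 3) arrays."""
    return float(np.linalg.norm(pred - gt, axis=-1).mean())

def procrustes_align(pred, gt):
    """Align pred to gt with the least-squares scale, rotation, and translation."""
    mu_p, mu_g = pred.mean(axis=0), gt.mean(axis=0)
    p, g = pred - mu_p, gt - mu_g
    u, s, vt = np.linalg.svd(p.T @ g)
    # Flip the last singular direction if needed so the result is a proper
    # rotation (determinant +1) rather than a reflection.
    d = np.ones(3)
    if np.linalg.det(u @ vt) < 0:
        d[-1] = -1.0
    rot = (u * d) @ vt
    scale = (s * d).sum() / (p ** 2).sum()
    return scale * p @ rot + mu_g

def pa_mpjpe(pred, gt):
    """MPJPE after Procrustes alignment of pred onto gt."""
    return mpjpe(procrustes_align(pred, gt), gt)

# Synthetic check: the prediction is the ground truth under a similarity
# transform, so the raw error is large but the aligned error is near zero.
rng = np.random.default_rng(0)
gt = rng.normal(size=(14, 3))
theta = np.pi / 6
rot_z = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                  [np.sin(theta),  np.cos(theta), 0.0],
                  [0.0,            0.0,           1.0]])
pred = 1.5 * gt @ rot_z + np.array([0.1, -0.2, 0.3])
print(mpjpe(pred, gt), pa_mpjpe(pred, gt))
```

Because the alignment removes global scale, rotation, and translation, PA-MPJPE isolates errors in the articulated pose itself, which is why it is lower than MPJPE in every row that reports both.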