MobilityBench: A Benchmark for Evaluating Route-Planning Agents in Real-World Mobility Scenarios

Zhiheng Song Jingshuai Zhang Chuan Qin Chao Wang Chao Chen Longfei Xu Kaikui Liu Xiangxiang Chu Hengshu Zhu

Abstract

Route-planning agents powered by large language models (LLMs) have emerged as a promising paradigm for supporting everyday human mobility through natural language interaction and tool-mediated decision making. However, systematic evaluation in real-world mobility settings is hindered by diverse routing demands, non-deterministic mapping services, and limited reproducibility. In this study, we introduce MobilityBench, a scalable benchmark for evaluating LLM-based route-planning agents in real-world mobility scenarios. MobilityBench is constructed from large-scale, anonymized real user queries collected from Amap and covers a broad spectrum of route-planning intents across multiple cities worldwide. To enable reproducible, end-to-end evaluation, we design a deterministic API-replay sandbox that eliminates environmental variance from live services. We further propose a multi-dimensional evaluation protocol centered on outcome validity, complemented by assessments of instruction understanding, planning, tool use, and efficiency. Using MobilityBench, we evaluate multiple LLM-based route-planning agents across diverse real-world mobility scenarios and provide an in-depth analysis of their behaviors and performance. Our findings reveal that current models perform competently on Basic Information Retrieval and Route Planning tasks, yet struggle considerably with Preference-Constrained Route Planning, underscoring significant room for improvement in personalized mobility applications. We publicly release the benchmark data, evaluation toolkit, and documentation at https://github.com/AMAP-ML/MobilityBench.

One-sentence Summary

Researchers from CNIC (Chinese Academy of Sciences) and AMAP (Alibaba Group) introduce MobilityBench, a scalable, reproducible benchmark for evaluating LLM-based route-planning agents on real-world Amap queries. It shows that current agents are strong on basic tasks but leave persistent gaps in preference-constrained planning for personalized mobility.

Key Contributions

- MobilityBench introduces a scalable, real-world benchmark for evaluating LLM-based route-planning agents, built from anonymized user queries across 350+ global cities and covering diverse intents like multi-waypoint routing, multimodal transit, and preference-aware navigation.

- To ensure reproducibility, the benchmark employs a deterministic API-replay sandbox that caches and replays mapping service responses, eliminating environmental variance from live traffic and service updates during evaluation.

- The evaluation protocol combines outcome validity with assessments of instruction understanding, planning, tool use, and efficiency, revealing that current models perform well on basic tasks but struggle with preference-constrained routing, highlighting gaps in personalized mobility support.

Introduction

The authors study route-planning agents built on large language models, which handle complex, real-world mobility requests through natural language and API interactions. Prior benchmarks fall short in two ways: they focus on high-level itinerary planning rather than fine-grained, constraint-rich navigation over real map data, and they depend on non-deterministic live APIs, which hinders reproducibility. MobilityBench addresses both problems with a scalable, real-world benchmark built from anonymized Amap user queries across 350+ cities, paired with a deterministic API-replay sandbox that keeps evaluation consistent. The multi-dimensional protocol evaluates not just outcome validity but also instruction understanding, planning, tool use, and efficiency. It reveals that current models handle basic tasks well but struggle with preference-constrained routing, a key gap for future work. The benchmark, toolkit, and data are publicly released to support reproducible, extensible research.

Dataset

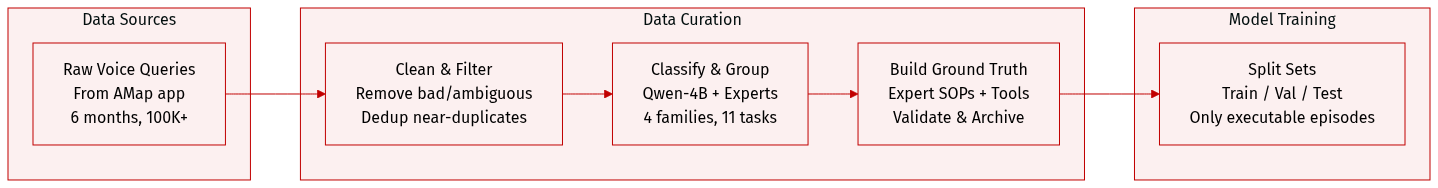

- The authors use MobilityBench, a scalable benchmark built from 100,000 anonymized mobility queries collected over six months from Amap, to evaluate route-planning agents in real-world scenarios.

- Each episode is a four-tuple (x, z, S, y), where x is a natural-language query, z provides context such as the user's location, S is a replayable API snapshot, and y is a structured ground-truth annotation used only for evaluation.

- Queries are voice-based, transcribed to text, and filtered to remove malformed or ambiguous requests; near-duplicates are deduplicated to ensure diversity.

- Task taxonomy is built using Qwen-4B for intent classification, expanded via open-set labeling, and refined by experts into 11 scenarios grouped into 4 families: Basic Information Retrieval, Route-Dependent Information Retrieval, Basic Route Planning, and Preference-Constrained Route Planning.

- Ground-truth annotations (y) are constructed via expert-defined SOPs, implemented as executable tool programs that extract slots, resolve locations, and invoke routing/weather/traffic tools; outputs are validated and archived for evaluation.

- All tool interactions run through a deterministic replay sandbox that serves cached Amap API responses; calls with no exact match in the cache are resolved by fallback strategies such as fuzzy matching or spatial nearest-neighbor lookup, and responses are subject to strict schema validation.

- The dataset spans 22 countries and 350+ cities, with task distribution: 36.6% Basic Info Retrieval, 9.6% Route-Dependent Info Retrieval, 42.5% Basic Route Planning, and 11.3% Preference-Constrained Route Planning.

- Episodes are filtered to retain only those with verifiable, executable outcomes; agents never see ground truth and cannot ask for clarification, ensuring self-contained, solvable queries.
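
The episode tuple and the replay sandbox described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the released toolkit: the names `Episode`, `ReplaySandbox`, and `call_key`, the `lat`/`lon` argument names, and the Euclidean distance used for the nearest-neighbor fallback are all hypothetical. Fuzzy matching and schema validation are omitted.

```python
import json
import math
from dataclasses import dataclass, field


def call_key(tool: str, args: dict) -> str:
    """Canonical cache key: tool name plus JSON arguments with sorted keys."""
    return tool + "|" + json.dumps(args, sort_keys=True)


@dataclass
class Episode:
    query: str                                        # x: natural-language request
    context: dict                                     # z: e.g. the user's location
    snapshot: dict                                    # S: cached responses by call_key
    ground_truth: dict = field(default_factory=dict)  # y: used only by the evaluator


class ReplaySandbox:
    """Serves tool calls from an episode snapshot instead of a live service."""

    def __init__(self, episode: Episode):
        self._cache = episode.snapshot

    def call(self, tool: str, args: dict) -> dict:
        key = call_key(tool, args)
        if key in self._cache:
            return self._cache[key]          # exact replay
        return self._nearest(tool, args)     # assumed spatial fallback

    def _nearest(self, tool: str, args: dict) -> dict:
        if "lat" not in args or "lon" not in args:
            raise KeyError(f"no cached response for {tool}")
        best, best_dist = None, math.inf
        for key, response in self._cache.items():
            cached_tool, raw_args = key.split("|", 1)
            cached = json.loads(raw_args)
            if cached_tool != tool or "lat" not in cached or "lon" not in cached:
                continue
            dist = math.hypot(cached["lat"] - args["lat"],
                              cached["lon"] - args["lon"])
            if dist < best_dist:
                best, best_dist = response, dist
        if best is None:
            raise KeyError(f"no cached response for {tool}")
        return best


# Illustrative data only; not taken from the benchmark.
episode = Episode(
    query="What is the weather near me?",
    context={"lat": 39.90, "lon": 116.40},
    snapshot={
        call_key("weather", {"lat": 39.90, "lon": 116.40}): {"condition": "sunny"},
        call_key("weather", {"lat": 31.23, "lon": 121.47}): {"condition": "rain"},
    },
)
sandbox = ReplaySandbox(episode)
exact = sandbox.call("weather", {"lat": 39.90, "lon": 116.40})
near = sandbox.call("weather", {"lat": 31.20, "lon": 121.50})
```

Because every response comes from the snapshot, two runs of the same agent see identical tool outputs, which is the property the sandbox is meant to guarantee. The agent is handed only `query`, `context`, and the sandbox; `ground_truth` stays with the evaluator.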

Experiment

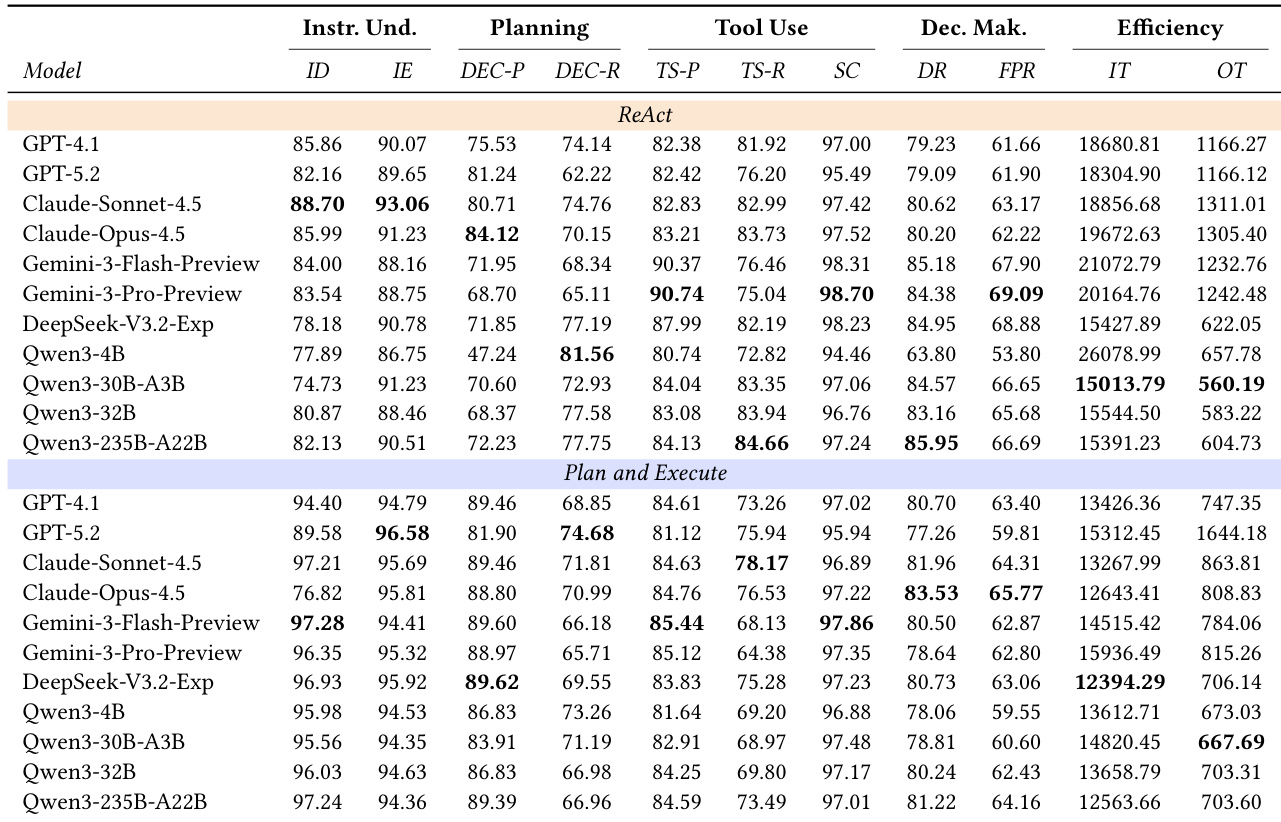

- Introduced a multi-dimensional evaluation protocol that decomposes agent performance into four core capabilities (Instruction Understanding, Planning, Tool Use, and Decision Making), enabling fine-grained diagnosis beyond end-to-end success rates.

- Evaluated agents across 7,098 real-world mobility episodes using diverse LLMs (open-source and closed-source) under two frameworks: ReAct and Plan-and-Execute.

- Claude-Opus-4.5 and Gemini-3-Pro-Preview led in overall performance; open-source models like Qwen3-235B-A22B and DeepSeek-V3.2-Exp showed strong competitiveness, especially in cost-efficiency.

- ReAct outperformed Plan-and-Execute in task success due to its dynamic feedback loop, but incurred ~35% higher input token usage, increasing computational cost.

- Plan-and-Execute excelled in preference-constrained, logic-heavy scenarios by enforcing upfront planning, reducing deviation and hallucination.

- Larger models consistently improved performance, following scaling laws, with MoE architectures offering notable gains; larger models also generated longer, more exhaustive plans.

- Enabling “Thinking” mode boosted final pass rates across models (up to +6% for Qwen-30B-A3B) but significantly increased token output and latency, limiting real-time deployment viability.

The authors use a multi-dimensional evaluation protocol to assess route-planning agents across instruction understanding, planning, tool use, decision making, and efficiency, revealing that closed-source models like Claude-Opus-4.5 and Gemini-3-Pro-Preview generally lead in task success, while large open-source models such as Qwen3-235B-A22B show competitive performance with lower computational cost. Results show that the ReAct framework achieves higher final pass rates due to its dynamic feedback loop but incurs significantly higher token usage, whereas Plan-and-Execute offers better efficiency at the cost of reduced robustness in complex scenarios. Model scaling and reasoning modes both improve performance, with larger models and enabled thinking yielding higher success rates, though at the expense of increased latency and resource consumption.
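
The structural difference between the two frameworks, and why ReAct tends to consume more input tokens, can be illustrated with a toy sketch. Everything here is an assumption made for illustration: `scripted_policy` and `scripted_planner` stand in for an LLM, `count_tokens` is a whitespace word count rather than a real tokenizer, and the tools return canned strings. The sketch does not attempt to reproduce the reported ~35% gap, and a real Plan-and-Execute executor would also spend tokens while carrying out each step.

```python
from typing import Callable, Optional

Action = tuple[str, str]  # (tool name, argument)

TOOLS: dict[str, Callable[[str], str]] = {
    "geocode": lambda place: "30.24,120.15",   # canned response
    "route": lambda place: "12 min by car",    # canned response
}

SCRIPT: list[Action] = [("geocode", "West Lake"), ("route", "West Lake")]


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: whitespace-separated words."""
    return len(text.split())


def scripted_policy(history: list[str]) -> Optional[Action]:
    """Hypothetical ReAct policy: picks the next action from the history so far."""
    done = len(history) - 1
    return SCRIPT[done] if done < len(SCRIPT) else None


def scripted_planner(query: str) -> list[Action]:
    """Hypothetical planner: commits to the full plan from the query alone."""
    return list(SCRIPT)


def run_react(query: str, policy, tools) -> tuple[list[str], int]:
    history, input_tokens = [query], 0
    while True:
        # Each decision re-reads the query plus all earlier observations.
        input_tokens += count_tokens(" ".join(history))
        action = policy(history)
        if action is None:
            return history[1:], input_tokens
        name, arg = action
        history.append(f"{name}({arg}) -> {tools[name](arg)}")


def run_plan_and_execute(query: str, planner, tools) -> tuple[list[str], int]:
    input_tokens = count_tokens(query)  # a single planning call
    trace = [f"{name}({arg}) -> {tools[name](arg)}" for name, arg in planner(query)]
    return trace, input_tokens


query = "Drive me to West Lake"
react_trace, react_tokens = run_react(query, scripted_policy, TOOLS)
pe_trace, pe_tokens = run_plan_and_execute(query, scripted_planner, TOOLS)
```

Both runs issue the same tool calls here, but the ReAct loop pays for the growing history at every decision. That feedback is also what lets it react to an unexpected observation, whereas the upfront plan is fixed before any tool has been called.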