Command Palette

Search for a command to run...

Using Learning Progressions to Guide AI Feedback for Science Learning

Using Learning Progressions to Guide AI Feedback for Science Learning

Xin Xia Nejla Yuruk Yun Wang Xiaoming Zhai

Abstract

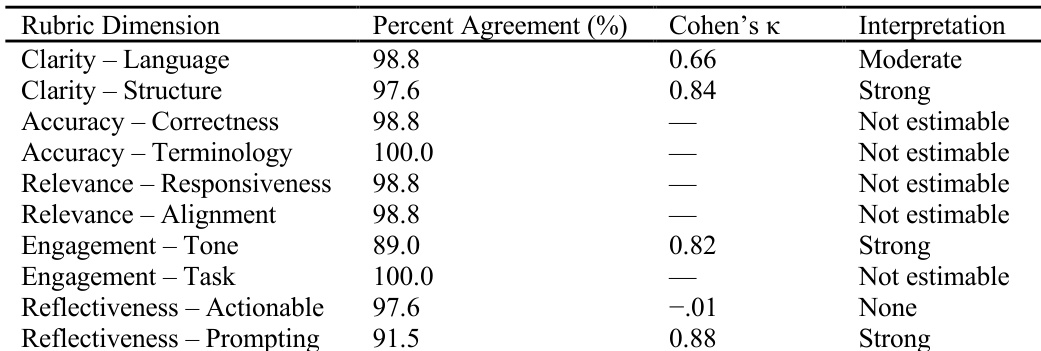

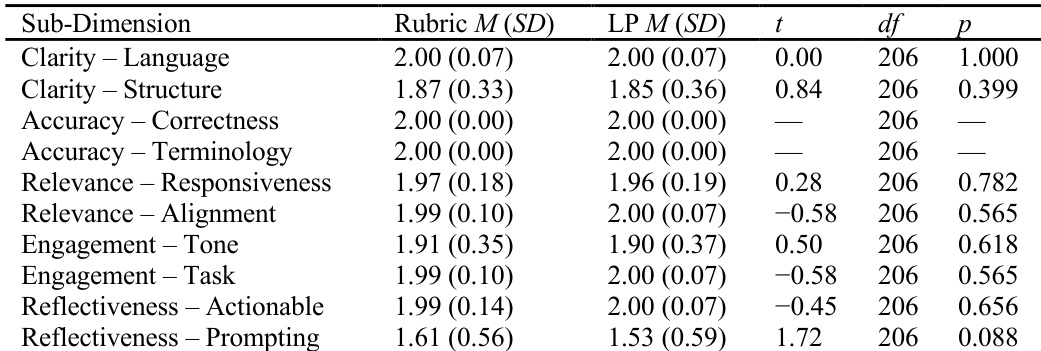

Generative artificial intelligence (AI) offers scalable support for formative feedback, yet most AI-generated feedback relies on task-specific rubrics authored by domain experts. While effective, rubric authoring is time-consuming and limits scalability across instructional contexts. Learning progressions (LP) provide a theoretically grounded representation of students' developing understanding and may offer an alternative solution. This study examines whether an LP-driven rubric generation pipeline can produce AI-generated feedback comparable in quality to feedback guided by expert-authored task rubrics. We analyzed AI-generated feedback for written scientific explanations produced by 207 middle school students in a chemistry task. Two pipelines were compared: (a) feedback guided by a human expert-designed, task-specific rubric, and (b) feedback guided by a task-specific rubric automatically derived from a learning progression prior to grading and feedback generation. Two human coders evaluated feedback quality using a multi-dimensional rubric assessing Clarity, Accuracy, Relevance, Engagement and Motivation, and Reflectiveness (10 sub-dimensions). Inter-rater reliability was high, with percent agreement ranging from 89% to 100% and Cohen's kappa values for estimable dimensions (kappa = .66 to .88). Paired t-tests revealed no statistically significant differences between the two pipelines for Clarity (t1 = 0.00, p1 = 1.000; t2 = 0.84, p2 = .399), Relevance (t1 = 0.28, p1 = .782; t2 = -0.58, p2 = .565), Engagement and Motivation (t1 = 0.50, p1 = .618; t2 = -0.58, p2 = .565), or Reflectiveness (t = -0.45, p = .656). These findings suggest that the LP-driven rubric pipeline can serve as an alternative solution.

One-sentence Summary

Researchers from the University of Georgia and Gazi University propose an LP-driven rubric pipeline that generates AI feedback for middle school chemistry explanations as effectively as expert-authored rubrics, enabling scalable, theory-grounded formative assessment without task-specific human rubric design.

Key Contributions

- The study addresses the scalability bottleneck in AI-generated feedback by replacing labor-intensive expert-authored rubrics with rubrics automatically derived from learning progressions, which map students’ conceptual development in science.

- It introduces an LP-driven pipeline that generates feedback for middle school chemistry explanations and compares its quality against expert-rubric-guided feedback across five dimensions using human coder evaluations of 207 student responses.

- No statistically significant differences were found between the two feedback pipelines across Clarity, Relevance, Engagement and Motivation, or Reflectiveness, supporting LP-derived rubrics as a viable, scalable alternative to expert-designed ones.

Introduction

The authors leverage learning progressions (LPs) — empirically grounded models of how students’ understanding develops — to automatically generate task-specific rubrics for AI feedback in science education. This addresses a key bottleneck in current AI feedback systems, which rely on time-intensive, expert-authored rubrics that limit scalability across diverse classroom tasks. While prior work shows AI can generate useful feedback when guided by detailed rubrics, building those rubrics for every new task is impractical. The authors demonstrate that LP-derived rubrics produce AI feedback statistically indistinguishable in quality from expert-authored ones across dimensions like clarity, relevance, and reflectiveness — suggesting LPs can serve as a reusable pedagogical backbone to automate rubric creation and scale feedback without sacrificing quality.

Dataset

-

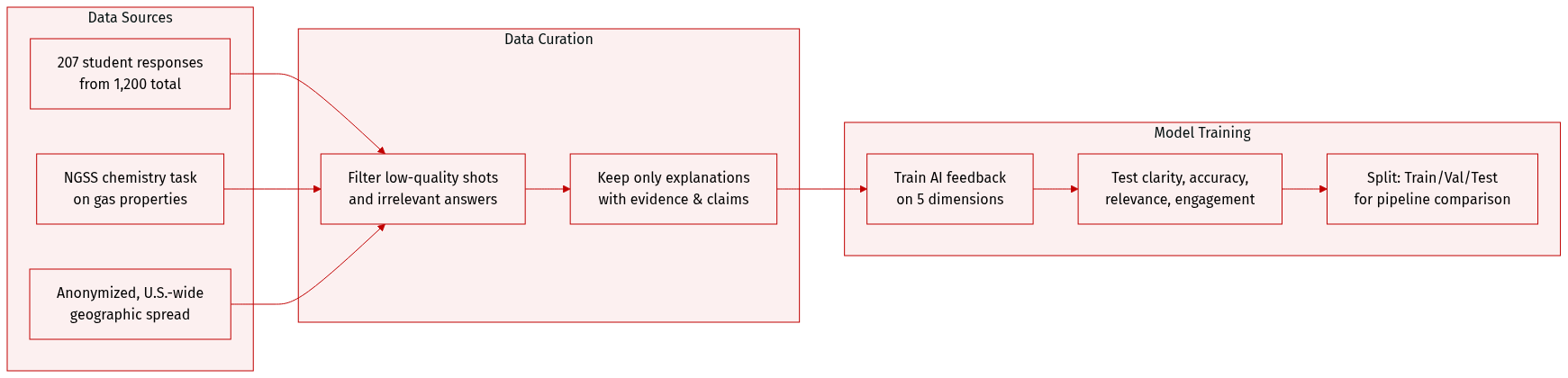

The authors use 207 anonymized middle school student responses drawn randomly from a larger pool of 1,200 responses collected via an NGSS-aligned online assessment system. No demographic data is available due to anonymization, but the sample reflects a broad U.S. geographic distribution.

-

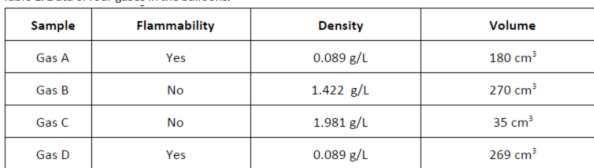

All responses stem from a single open-ended chemistry task focused on gas properties, sourced from the Next Generation Science Assessment task set. Students analyzed data on flammability, volume, and density across four gas samples and explained which gases could be the same, justifying their reasoning with evidence.

-

The task is designed to assess scientific explanation skills—specifically, connecting evidence to claims using appropriate terminology—and serves as the sole context for evaluating AI-generated formative feedback.

-

Feedback evaluation focuses on five dimensions: Clarity, Accuracy, Relevance, Engagement and Motivation, and Reflectiveness. The dataset is used exclusively to test how different AI feedback pipelines respond to student explanations and to compare feedback quality across these dimensions.

Method

The authors leverage a unified large language model—GPT-5.1—to generate feedback across both evaluation pipelines, ensuring methodological consistency. For each student response, the model is prompted to perform two core tasks: first, to evaluate the response against a specified rubric, and second, to produce formative feedback that directly aligns with the evaluation outcome. The feedback is intentionally crafted to be developmentally appropriate, supportive in tone, and pedagogically focused on guiding students toward improved scientific explanation skills.

To isolate the impact of rubric origin on feedback quality, both pipelines employ identical prompting strategies and output constraints. The only variable introduced is the source of the rubric—either human-authored or derived from a learning progression framework. This controlled design enables a direct comparison of how rubric provenance influences the quality and utility of the generated feedback.

As shown in the figure below:

Experiment

- Gas-filled balloon experiment validated measurement of gas properties under controlled conditions, focusing on flammability, volume, mass, and density.

- Two AI feedback pipelines (Expert-Rubric and Learning-Progression) were compared using a within-subjects design; both produced high-quality feedback across all dimensions.

- Feedback quality was assessed via a 5-dimension rubric (Clarity, Accuracy, Relevance, Engagement, Reflectiveness); both pipelines scored near ceiling, with perfect accuracy in scientific content.

- No statistically significant differences were found between the two pipelines across any feedback dimension, indicating equivalent effectiveness.

- Reflectiveness prompting showed slightly lower and more variable scores, suggesting room for improvement in encouraging student reflection.

- Results confirm that structured, task-aligned AI feedback can reliably deliver scientifically accurate, clear, and motivating guidance at scale.

The authors use a multi-dimensional rubric to evaluate AI-generated feedback across five quality dimensions, with human coders achieving high percent agreement and moderate to strong inter-rater reliability for most dimensions. Results show that both expert-rubric and learning-progression pipelines produce consistently high-quality feedback, with no statistically significant differences between them across any evaluable sub-dimension. Feedback was uniformly accurate, clear, relevant, and engaging, though reflectiveness prompting showed greater variability in quality.

The authors compared two AI feedback pipelines—one using expert-designed rubrics and the other using learning progression-derived criteria—and found no statistically significant differences in feedback quality across any evaluated dimension. Both approaches consistently produced high-quality, scientifically accurate, and pedagogically sound feedback under controlled conditions. Results suggest that structuring AI feedback with either expert or progression-based criteria can yield similarly effective outcomes for student support.

The authors use a controlled experiment to compare gas properties across four samples, measuring flammability, density, and volume under identical conditions. Results show that flammability does not correlate with density or volume, as both flammable and non-flammable gases appear across the full range of measured values. The data indicate that these physical properties must be evaluated independently to characterize each gas accurately.