Command Palette

Search for a command to run...

IndexCache: Accelerating Sparse Attention via Cross-Layer Index Reuse

IndexCache: Accelerating Sparse Attention via Cross-Layer Index Reuse

Yushi Bai Qian Dong Ting Jiang Xin Lv Zhengxiao Du Aohan Zeng Jie Tang Juanzi Li

Abstract

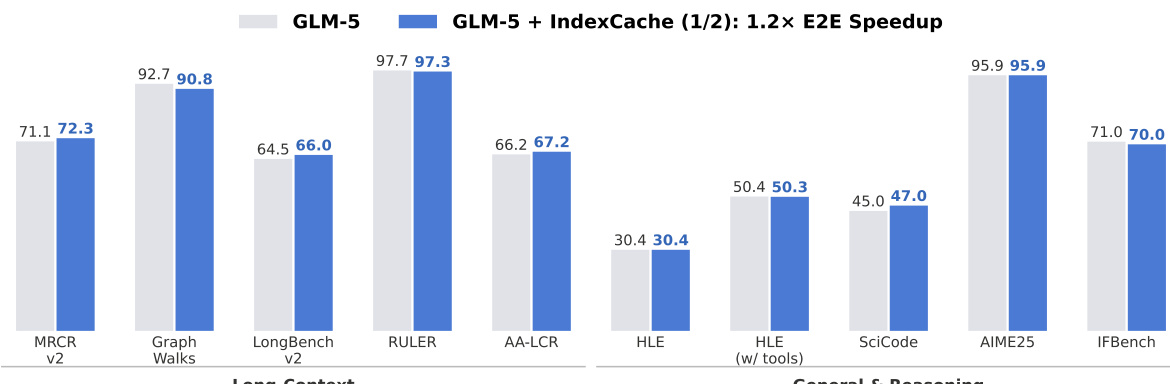

Long-context agentic workflows have emerged as a defining use case for large language models, making attention efficiency critical for both inference speed and serving cost. Sparse attention addresses this challenge effectively, and DeepSeek Sparse Attention (DSA) is a representative production-grade solution: a lightweight lightning indexer selects the top-k most relevant tokens per query, reducing core attention from O(L2) to O(Lk). However, the indexer itself retains O(L2) complexity and must run independently at every layer, despite the fact that the resulting top-k selections are highly similar across consecutive layers. We present IndexCache, which exploits this cross-layer redundancy by partitioning layers into a small set of Full layers that run their own indexers and a majority of Shared layers that simply reuse the nearest Full layer's top-k indices. We propose two complementary approaches to determine and optimize this configuration. Training-free IndexCache applies a greedy search algorithm that selects which layers to retain indexers by directly minimizing language modeling loss on a calibration set, requiring no weight updates. Training-aware IndexCache introduces a multi-layer distillation loss that trains each retained indexer against the averaged attention distributions of all layers it serves, enabling even simple interleaved patterns to match full-indexer accuracy. Experimental results on a 30B DSA model show that IndexCache can remove 75% of indexer computations with negligible quality degradation, achieving up to 1.82imes prefill speedup and 1.48imes decode speedup compared to standard DSA. These positive results are further confirmed by our preliminary experiments on the production-scale GLM-5 model (Figure 1).

One-sentence Summary

Researchers from Tsinghua University and Z.ai introduce IndexCache, a technique that optimizes DeepSeek Sparse Attention by exploiting cross-layer redundancy to share token indices. This approach eliminates up to 75% of indexer computations in long-context workflows, delivering significant inference speedups without requiring model retraining or degrading output quality.

Key Contributions

- Long-context agentic workflows rely on DeepSeek Sparse Attention to reduce core attention complexity, yet the required lightning indexer still incurs quadratic O(L2) cost at every layer, creating a significant bottleneck for inference speed and serving costs.

- IndexCache addresses this redundancy by partitioning layers into Full layers that compute indices and Shared layers that reuse the nearest Full layer's top-k selections, utilizing either a training-free greedy search or a training-aware multi-layer distillation loss to optimize the configuration.

- Experiments on a 30B DSA model demonstrate that IndexCache removes 75% of indexer computations with negligible quality degradation, achieving up to 1.82x prefetch and 1.48x decode speedups while maintaining performance across nine long-context and reasoning benchmarks.

Introduction

Large language models face a critical bottleneck in long-context inference due to the quadratic complexity of self-attention, which sparse mechanisms like DeepSeek Sparse Attention (DSA) address by selecting only the most relevant tokens. While DSA reduces core attention costs, its reliance on a lightweight indexer at every layer still incurs quadratic overhead that dominates latency during the prefill stage. The authors leverage the observation that token selection patterns remain highly stable across consecutive layers to introduce IndexCache, a method that eliminates up to 75% of indexer computations by reusing indices from a small subset of retained layers. They propose both a training-free approach using greedy layer selection and a training-aware strategy with multi-layer distillation to maintain model quality while achieving significant speedups in long-context scenarios.

Method

The authors leverage the observation that sparse attention indexers exhibit significant redundancy across consecutive layers to reduce computational overhead. In standard DeepSeek Sparse Attention, a lightweight lightning indexer scores all preceding tokens at every layer to select the top-k positions. While this reduces core attention complexity from O(L2) to O(Lk), the indexer itself retains O(L2) complexity. IndexCache addresses this by partitioning the N transformer layers into two categories: Full layers and Shared layers. Full layers retain their indexers to compute fresh top-k sets, while Shared layers skip the indexer forward pass and reuse the index set from the nearest preceding Full layer. This design allows the system to eliminate a large fraction of the total indexer cost with minimal architectural changes.

To determine the optimal configuration of Full and Shared layers without retraining, the authors propose a training-free greedy search algorithm. The process begins with all layers designated as Full. The algorithm iteratively evaluates the language modeling loss on a calibration set for each candidate layer conversion. At each step, the layer whose conversion to Shared status results in the lowest loss increase is selected. This data-driven approach identifies which indexers are expendable based on their intrinsic importance to the model's performance rather than relying on uniform interleaving patterns.

For models trained from scratch or via continued pre-training, a training-aware approach further optimizes the indexer parameters for cross-layer sharing. Standard training distills the indexer against the attention distribution of its own layer. IndexCache generalizes this by introducing a multi-layer distillation loss. This objective encourages the retained indexer to predict a top-k set that is jointly useful for itself and all subsequent Shared layers it serves. The loss function is defined as:

LmultiI=j=0∑mm+11t∑DKL(pt(ℓ+j)qt(ℓ)),where pt(ℓ+j) represents the aggregated attention distribution at layer ℓ+j and qt(ℓ) is the indexer's output distribution. Theoretical analysis shows that this multi-layer loss produces gradients equivalent to distilling against the averaged attention distribution of all served layers. This ensures the indexer learns a consensus top-k selection that covers important tokens across the entire group of layers.

Experimental evaluations on a 30B parameter model demonstrate the efficiency gains achieved by removing indexer computations. The method successfully eliminates up to 75% of indexer costs while maintaining comparable quality. Performance metrics regarding prefill time and decode throughput are summarized below.

The results confirm that IndexCache delivers significant speedups in both prefill and decode phases without degrading model capabilities.

Experiment

- End-to-end inference experiments demonstrate that IndexCache significantly accelerates both prefill latency and decode throughput for long-context scenarios, with speedups increasing as context length grows, while maintaining comparable performance on general reasoning tasks.

- Training-free IndexCache evaluations reveal that greedy-searched sharing patterns are essential for preserving long-context accuracy at aggressive retention ratios, whereas uniform interleaving causes substantial degradation; however, general reasoning capabilities remain robust across most configurations.

- Training-aware IndexCache results show that retraining the model to adapt to index sharing eliminates the sensitivity to specific patterns, allowing simple uniform interleaving to match full-indexer performance and confirming the effectiveness of cross-layer distillation.

- Scaling experiments on a 744B-parameter model validate that the trends observed in smaller models hold true, with searched patterns providing stable quality recovery even at high sparsity levels.

- Analysis of cross-layer index overlap confirms high redundancy between adjacent layers but reveals that local similarity metrics fail to identify optimal sharing patterns, necessitating end-to-end loss-based search to prevent cascading errors in deep networks.