Command Palette

Search for a command to run...

VideoDetective: Clue Hunting via both Extrinsic Query and Intrinsic Relevance for Long Video Understanding

VideoDetective: Clue Hunting via both Extrinsic Query and Intrinsic Relevance for Long Video Understanding

Ruoliu Yang Chu Wu Caifeng Shan Ran He Chaoyou Fu

Abstract

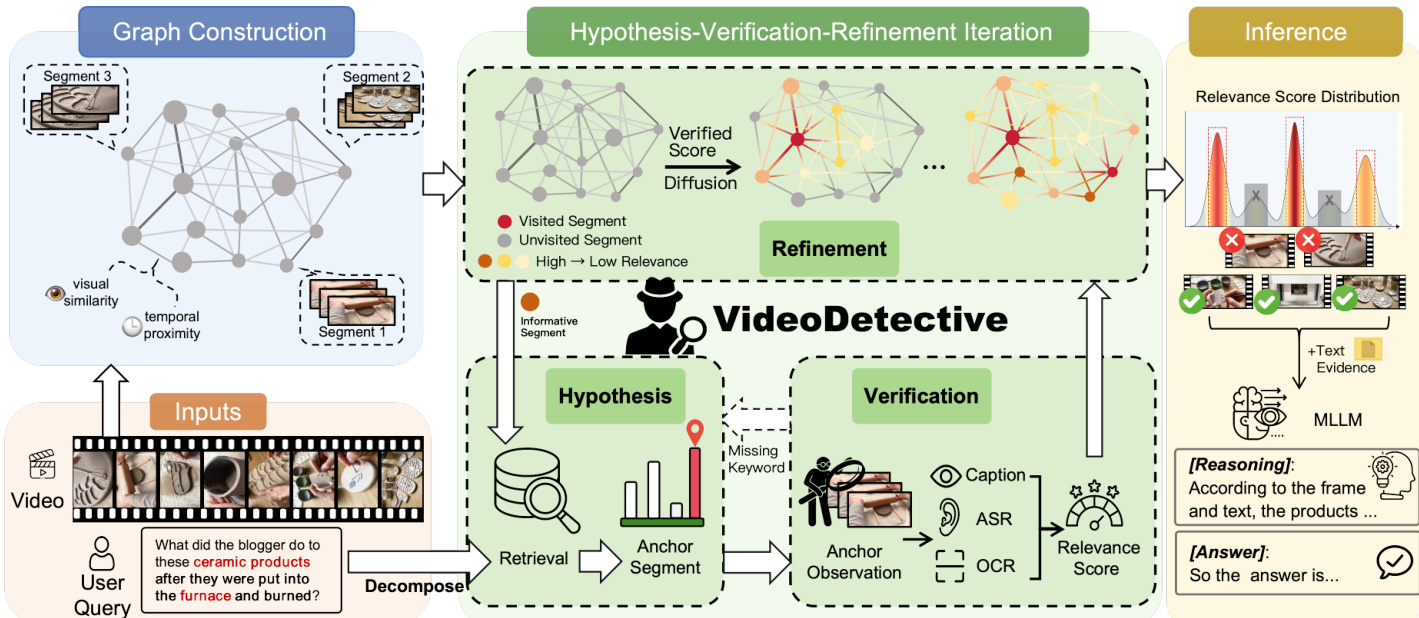

Long video understanding remains challenging for multimodal large language models (MLLMs) due to limited context windows, which necessitate identifying sparse query-relevant video segments. However, existing methods predominantly localize clues based solely on the query, overlooking the video's intrinsic structure and varying relevance across segments. To address this, we propose VideoDetective, a framework that integrates query-to-segment relevance and inter-segment affinity for effective clue hunting in long-video question answering. Specifically, we divide a video into various segments and represent them as a visual-temporal affinity graph built from visual similarity and temporal proximity. We then perform a Hypothesis-Verification-Refinement loop to estimate relevance scores of observed segments to the query and propagate them to unseen segments, yielding a global relevance distribution that guides the localization of the most critical segments for final answering with sparse observation. Experiments show our method consistently achieves substantial gains across a wide range of mainstream MLLMs on representative benchmarks, with accuracy improvements of up to 7.5% on VideoMME-long. Our code is available at https://videodetective.github.io/

One-sentence Summary

Researchers from Nanjing University and the Chinese Academy of Sciences propose VideoDetective, a framework that enhances long video understanding by constructing visual-temporal affinity graphs and employing a Hypothesis-Verification-Refinement loop to localize sparse query-relevant segments, achieving significant accuracy gains on benchmarks like VideoMME-long.

Key Contributions

- The paper introduces VideoDetective, a long-video inference framework that models videos as a Spatio-Temporal Affinity Graph to integrate extrinsic query relevance with intrinsic visual and temporal correlations for effective clue localization.

- This work implements a Hypothesis-Verification-Refinement loop that utilizes graph diffusion to propagate sparse relevance scores from observed anchor segments, dynamically updating a global belief field to recover semantic information from limited observations.

- Experimental results demonstrate that the method acts as a plug-and-play solution that consistently improves performance across diverse MLLM backbones, achieving accuracy gains of up to 7.5% on the VideoMME-long benchmark.

Introduction

Long video understanding is critical for deploying Multimodal Large Language Models (MLLMs) on real-world content, yet these systems struggle with limited context windows that force them to identify sparse, query-relevant segments. Prior approaches rely on unidirectional query-to-video matching or simple sampling, which often overlook the video's intrinsic temporal structure and causal continuity, leading to missed clues and poor reasoning. To address this, the authors propose VideoDetective, a framework that models videos as Spatio-Temporal Affinity Graphs to jointly leverage extrinsic query relevance and intrinsic inter-segment correlations. By executing a Hypothesis-Verification-Refinement loop with graph diffusion, the method propagates relevance scores from observed segments to unseen ones, enabling accurate clue localization and significant accuracy gains across diverse MLLM backbones.

Dataset

- The authors estimate lower bounds for token consumption using official sampling rates, per-frame token counts from API documentation, and standard video resolution settings.

- This analysis serves as a baseline for models including Gemini-1.5-Pro, GPT-4o, and LLaVA-Video-72B rather than describing a specific training dataset composition.

- No training splits, mixture ratios, or filtering rules are defined in this section as the focus is on theoretical efficiency metrics.

- The text does not detail cropping strategies or metadata construction but relies on standard resolution configurations to calculate token usage.

Method

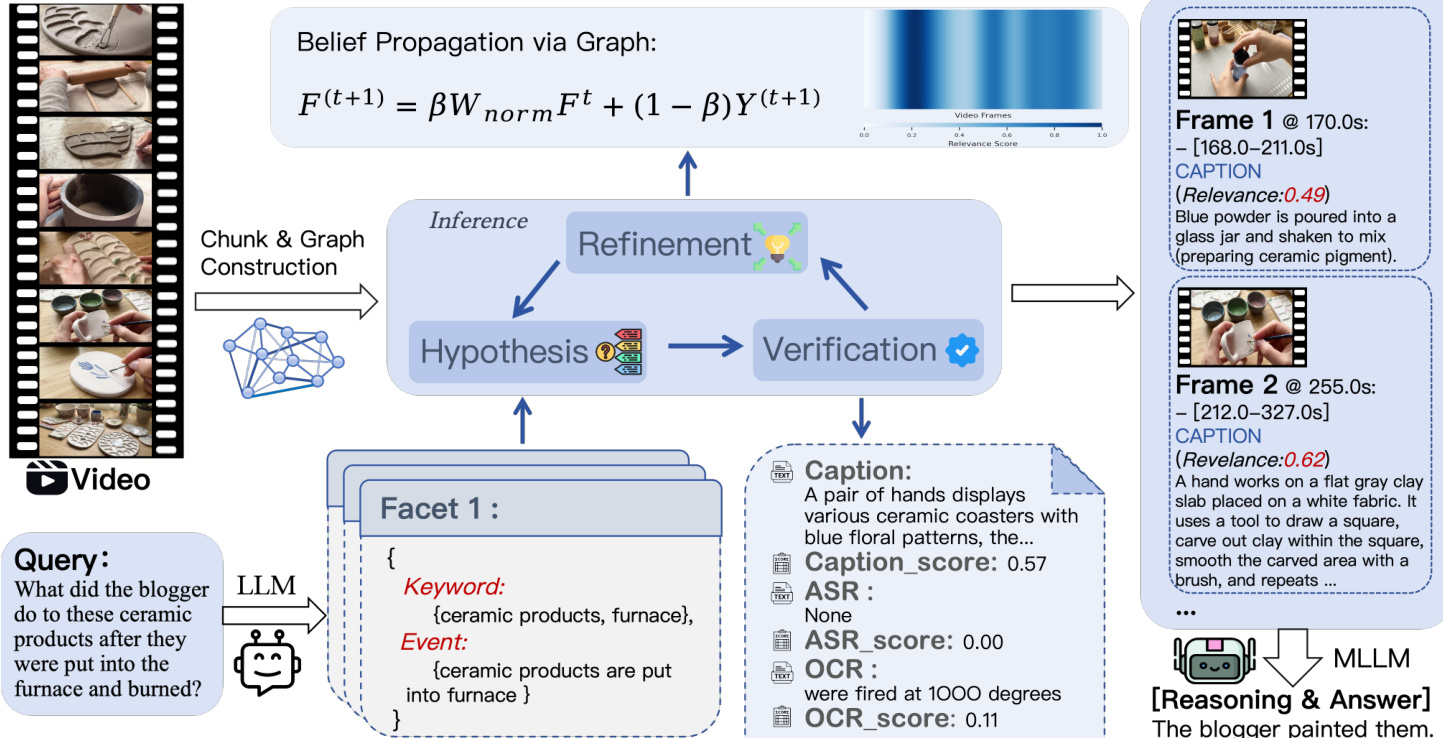

The authors propose VideoDetective, an inference framework that formulates long-video question answering as an iterative relevance state estimation problem on a visual-temporal affinity graph. The core objective is to efficiently combine extrinsic query relevance with intrinsic video correlations to localize query-related segments. The overall architecture consists of three main stages: Graph Construction, a Hypothesis-Verification-Refinement Iteration loop, and final Inference.

To model the continuous global belief field from sparse segment observations, the method first constructs a Visual-Temporal Affinity Graph. The video is divided into semantic segments based on visual similarity, where each segment serves as a node. The edges are defined by an affinity matrix that fuses visual similarity (cosine similarity of frame features) and temporal proximity (exponentially decaying kernel). This graph structure captures intrinsic associations, defining how relevance scores should propagate from observed anchor segments to unvisited ones.

The core of the framework is the Hypothesis-Verification-Refinement loop, which iteratively updates the relevance state. The system maintains two state vectors: an Injection Vector Y(t) representing sparse verified relevance scores, and a Belief Field F(t) representing the dense global relevance distribution inferred via graph diffusion.

In the Hypothesis phase, the user query is decomposed into semantic facets containing keywords and event descriptions. The system selects an anchor segment to verify. Initially, it uses Facet-Guided Initialization to find the best match. During iterations, it employs Informative Neighbor Exploration to select unvisited neighbors if evidence is missing, or Global Gap Filling to explore high-belief unvisited nodes if all facets are resolved.

Next, the Verification phase observes the selected anchor segment. The system extracts multi-source evidence including visual captions, on-screen text via OCR, and speech transcripts via ASR. A source-aware scoring mechanism computes the relevance score by combining lexical similarity (for precise text matching) and semantic similarity (for event understanding). This score is injected into the state vector Y(t).

Finally, the Refinement phase propagates the observed relevance scores across the graph to update the global belief field. This is achieved through iterative belief propagation, governed by the equation:

F(t+1)=βWnormFt+(1−β)Y(t+1)where Wnorm is the symmetric normalized affinity matrix and β balances smoothness and consistency. This process allows relevance signals to diffuse from sparse observations to the entire video structure.

Upon completion of the iterations, the converged global belief field serves as the final relevance distribution. The system applies Graph-NMS to select a diverse set of high-confidence segments, ensuring coverage of all query facets. These selected segments, along with their multimodal evidence, are packaged and fed into a downstream MLLM to generate the final answer.

Experiment

- Experiments on four long-video benchmarks validate that VideoDetective consistently outperforms proprietary and open-source baselines across various model scales, establishing new state-of-the-art results.

- Generalization tests confirm the framework acts as a plug-and-play solution that significantly boosts performance for diverse backbones without task-specific tuning.

- Ablation studies demonstrate that graph manifold propagation, semantic facet decomposition, and iterative hypothesis-verification loops are all essential components for reducing noise and correcting retrieval biases.

- Modality scaling analysis reveals that visual perception capabilities are the primary performance bottleneck, while the language model component requires only lightweight resources for effective query decomposition.

- Efficiency evaluations show that VideoDetective achieves superior accuracy with moderate token consumption, offering a better cost-effectiveness balance than both larger proprietary models and other method baselines.