Command Palette

Search for a command to run...

World Reasoning Arena

World Reasoning Arena

Abstract

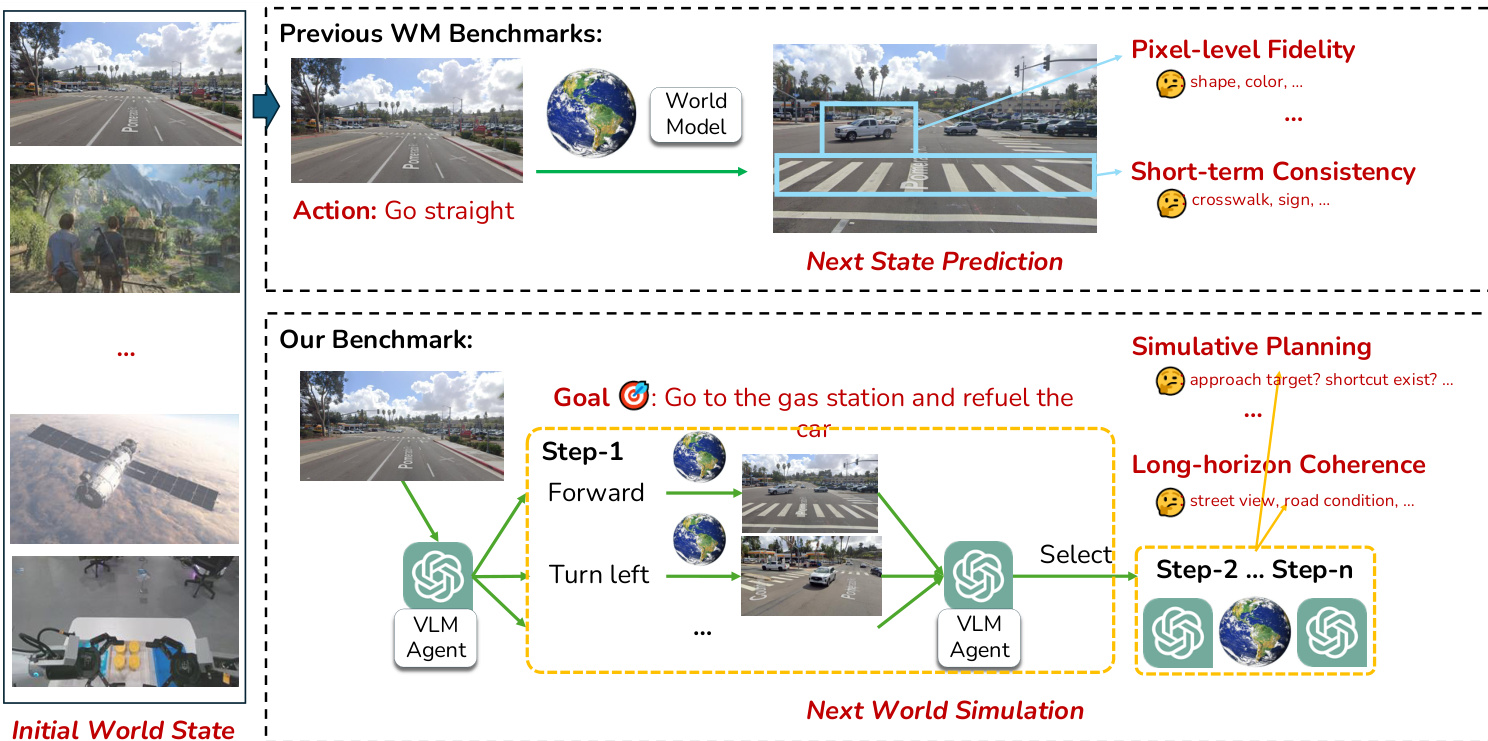

World models (WMs) are intended to serve as internal simulators of the real world that enable agents to understand, anticipate, and act upon complex environments. Existing WM benchmarks remain narrowly focused on next-state prediction and visual fidelity, overlooking the richer simulation capabilities required for intelligent behavior. To address this gap, we introduce WR-Arena, a comprehensive benchmark for evaluating WMs along three fundamental dimensions of next world simulation: (i) Action Simulation Fidelity, the ability to interpret and follow semantically meaningful, multi-step instructions and generate diverse counterfactual rollouts; (ii) Long-horizon Forecast, the ability to sustain accurate, coherent, and physically plausible simulations across extended interactions; and (iii) Simulative Reasoning and Planning, the ability to support goal-directed reasoning by simulating, comparing, and selecting among alternative futures in both structured and open-ended environments. We build a task taxonomy and curate diverse datasets designed to probe these capabilities, moving beyond single-turn and perceptual evaluations. Through extensive experiments with state-of-the-art WMs, our results expose a substantial gap between current models and human-level hypothetical reasoning, and establish WR-Arena as both a diagnostic tool and a guideline for advancing next-generation world models capable of robust understanding, forecasting, and purposeful action.

One-sentence Summary

To address the limitations of existing benchmarks focused on visual fidelity, the authors introduce WR-Arena, a comprehensive benchmark that evaluates world models across three fundamental dimensions: action simulation fidelity, long-horizon forecasting, and simulative reasoning and planning.

Key Contributions

- The paper introduces WR-Arena, a comprehensive benchmark designed to evaluate world models as internal simulators capable of supporting reasoning, long-range forecasting, and purposeful action.

- This work establishes a multi-dimensional evaluation framework that assesses action simulation fidelity, long-horizon forecasting, and simulative reasoning and planning through a curated task taxonomy and diverse datasets.

- Extensive experiments with state-of-the-art models demonstrate the benchmark's ability to diagnose significant gaps in instruction following and temporal consistency, providing a roadmap for developing next-generation world models.

Introduction

World models serve as internal simulators that allow intelligent agents to anticipate outcomes and perform mental thought experiments to guide decision making. This capability is critical for advancing embodied AI and autonomous systems that must navigate complex, unpredictable environments. However, existing benchmarks primarily focus on short term next state prediction and visual fidelity, which fails to measure whether a model can maintain physical consistency or support long horizon reasoning. The authors introduce WR-Arena, a comprehensive benchmark designed to evaluate world models across three advanced dimensions: action simulation fidelity, long horizon forecasting, and simulative reasoning and planning.

Method

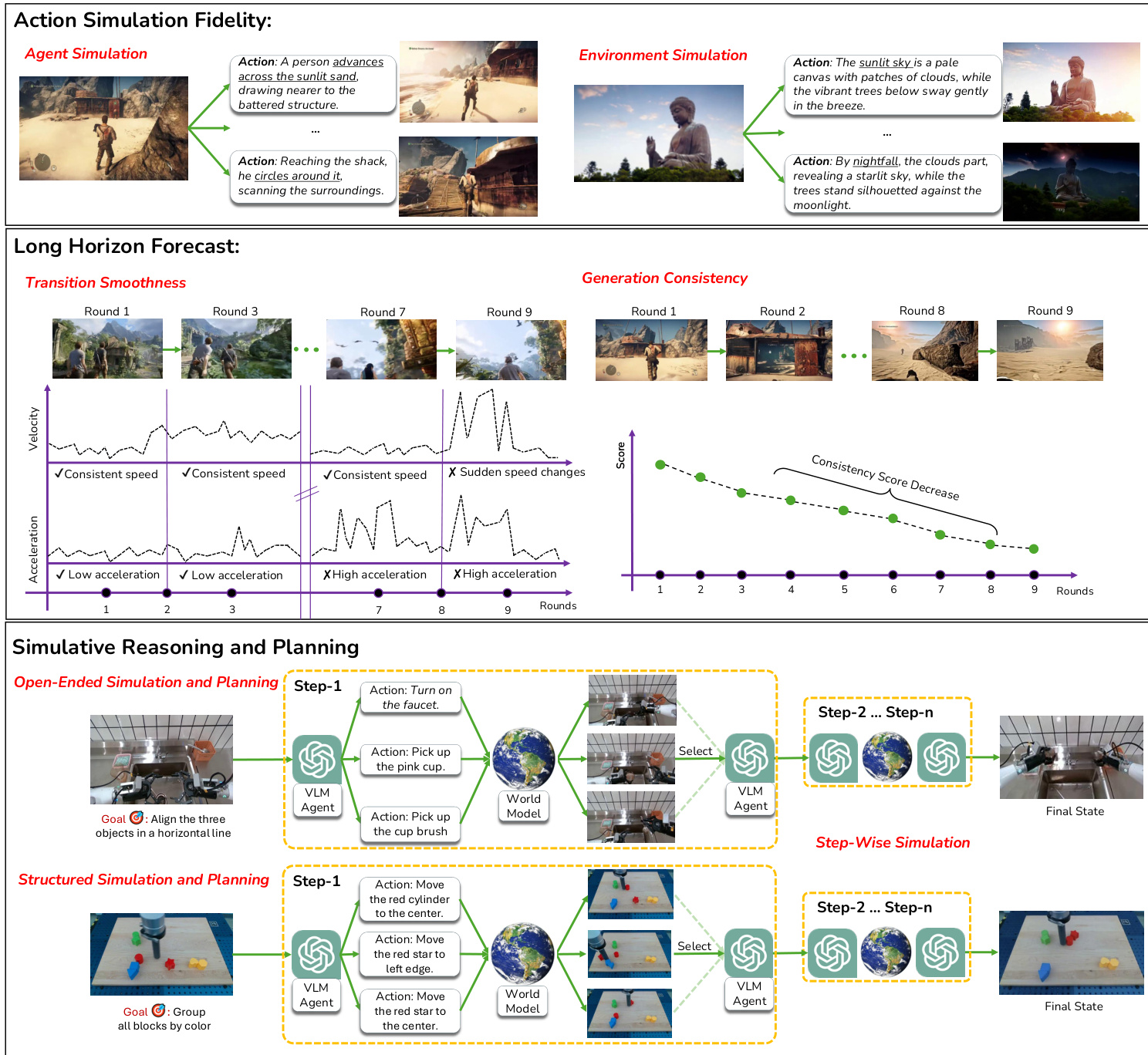

The authors propose an evaluation framework centered on Action Simulation Fidelity, which measures a world model's ability to accurately follow multi-step natural language instructions. This property assesses whether a model can generate a sequence of reasonable states that faithfully adhere to high-level control instructions, such as complex tasks involving multiple semantic steps.

The core methodology begins with an initial world state s0. To facilitate evaluation, the authors utilize a Large Language Model (LLM) to propose several multi-step high-level action sequences A=⟨a1,…,an⟩. These sequences are generated under specific feasibility constraints to ensure they are non-contradictory and causally applicable. Once the sequences are defined, the world model performs a rollout R(s0,A)=⟨s1,…,sT⟩, producing a sequence of states conditioned on the provided actions.

As shown in the figure below:

The proposed benchmark distinguishes itself from previous world model evaluations by moving beyond simple pixel-level fidelity or short-term consistency toward simulating complex, long-horizon planning and reasoning tasks.

The proposed benchmark distinguishes itself from previous world model evaluations by moving beyond simple pixel-level fidelity or short-term consistency toward simulating complex, long-horizon planning and reasoning tasks.

The evaluation is bifurcated into two distinct settings: Agent Simulation and Environment Simulation. In Agent Simulation, the goal is to determine if the model can drive a controllable entity through intended behaviors while maintaining stable background dynamics. By sampling multiple distinct action sequences A for a single s0, the authors induce counterfactual futures to verify if the model produces appropriately diverse yet coherent outcomes.

Conversely, Environment Simulation focuses on the model's ability to apply high-level scene interventions and simulate their causal consequences while the agent's policy remains neutral. This setting tests whether scene-level actions result in visually verifiable and predictable downstream effects.

To quantify the quality of these simulations, the authors employ vision-language models (VLMs) as judges. These judges score the generated rollouts based on two primary metrics: action faithfulness, which measures how well the simulation follows the instructions, and action precision, which assesses the accuracy of the resulting state changes.

Experiment

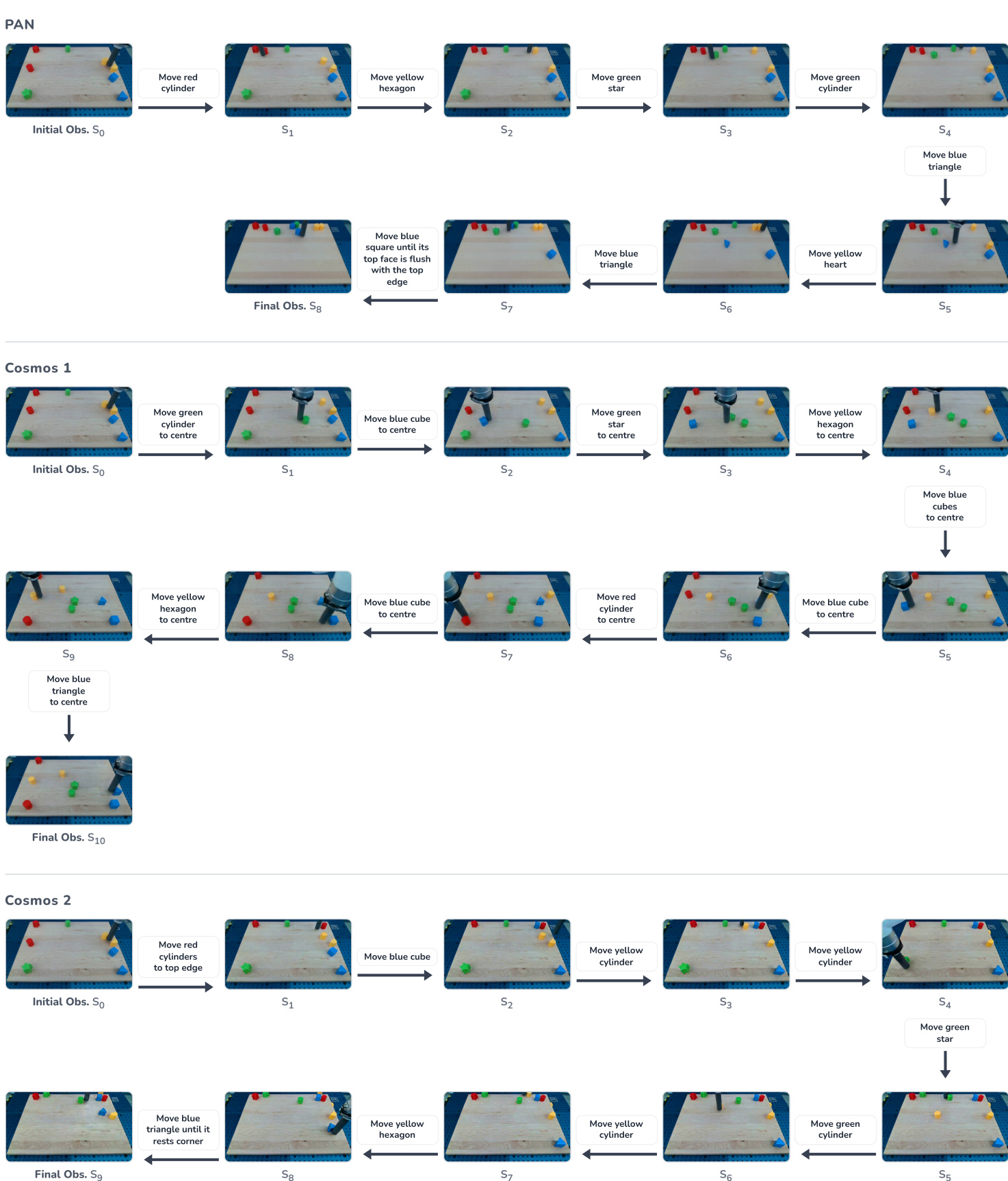

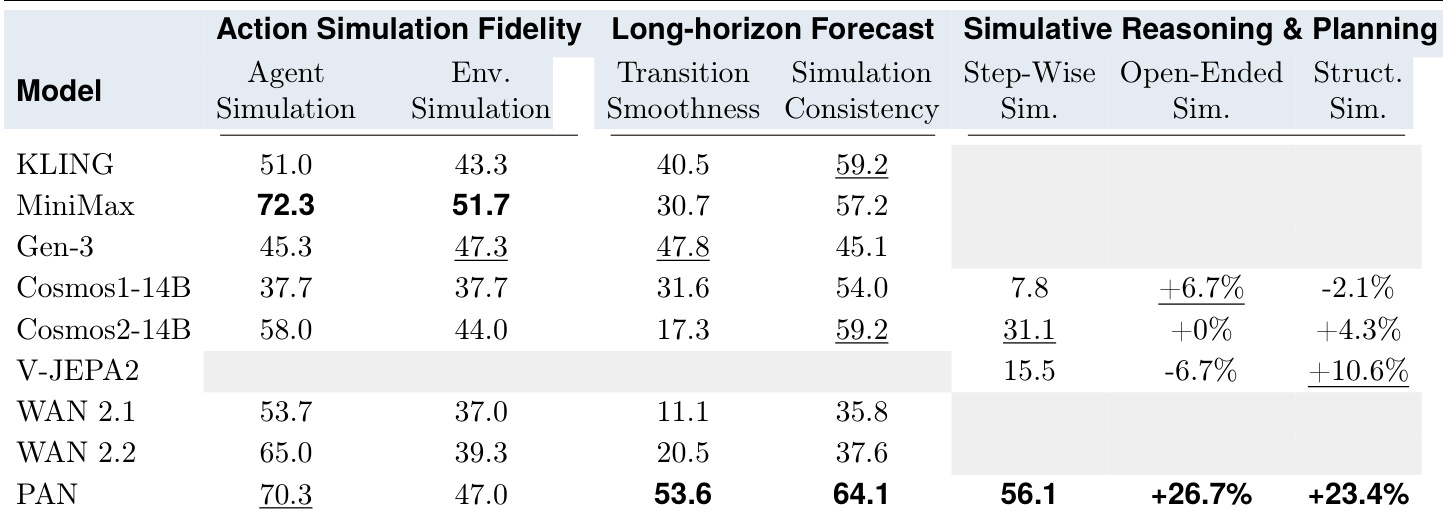

The evaluation assesses world models and video generators across three dimensions: action simulation fidelity, long-horizon forecasting, and simulative reasoning and planning. These experiments validate whether models can faithfully simulate environment changes, maintain temporal smoothness and consistency over extended sequences, and support goal-directed decision-making when integrated with a vision-language planner. While commercial video generators offer high perceptual quality, they often struggle with domain adaptation and long-term stability, whereas the PAN model demonstrates a more balanced ability to maintain semantic grounding and mitigate error accumulation during multi-step rollouts.

The authors evaluate various world models and video generators across three dimensions: action simulation fidelity, long-horizon forecasting, and simulative reasoning and planning. The results indicate that while different models excel in specific areas, maintaining consistency and smoothness over long sequences remains a significant challenge for all tested systems. PAN achieves the highest scores in transition smoothness and simulation consistency during long-horizon forecasting. MiniMax demonstrates strong performance in action simulation fidelity for both agent and environment-centric tasks. PAN provides the most substantial improvements in trajectory-level success for both open-ended and structured simulative planning tasks.

The authors evaluate several world models and video generators based on their action simulation fidelity, long-horizon forecasting capabilities, and simulative reasoning and planning abilities. While MiniMax shows strength in action simulation fidelity for various tasks, PAN demonstrates superior performance in maintaining transition smoothness, simulation consistency, and trajectory-level success during planning. Overall, the experiments reveal that while different models excel in specific dimensions, achieving consistency and smoothness over long sequences remains a persistent challenge for all evaluated systems.