Command Palette

Search for a command to run...

Two-Stage Acoustic Adaptation with Gated Cross-Attention Adapters for LLM-Based Multi-Talker Speech Recognition

Two-Stage Acoustic Adaptation with Gated Cross-Attention Adapters for LLM-Based Multi-Talker Speech Recognition

Hao Shi Yuan Gao Xugang Lu Tatsuya Kawahara

Abstract

Large Language Models (LLMs) are strong decoders for Serialized Output Training (SOT) in two-talker Automatic Speech Recognition (ASR), yet their performance degrades substantially in challenging conditions such as three-talker mixtures. A key limitation is that current systems inject acoustic evidence only through a projected prefix, which can be lossy and imperfectly aligned with the LLM input space, providing insufficient fine-grained grounding during decoding. Addressing this limitation is crucial for robust multi-talker ASR, especially in three-talker mixtures. This paper improves LLM-based multi-talker ASR by explicitly injecting talker-aware acoustic evidence into the decoder. We first revisit Connectionist Temporal Classification (CTC)-derived prefix prompting and compare three variants with increasing acoustic content. The CTC information is obtained using the serialized CTC proposed in our previous works. While acoustic-enriched prompts outperform the SOT-only baseline, prefix-only conditioning remains inadequate for three-talker mixtures. We therefore propose a lightweight gated residual cross-attention adapter and design a two-stage acoustic adaptation framework based on low-rank updates (LoRA). In Stage 1, we insert gated cross-attention adapters after the self-attention sub-layer to stably inject acoustic embeddings as external memory. In Stage 2, we refine both the cross-attention adapters and the pretrained LLM's self-attention projections using parameter-efficient LoRA, improving robustness for large backbones under limited data; the learned updates are merged into the base weights for inference. Experiments on Libri2Mix/Libri3Mix under clean and noisy conditions show consistent gains, with particularly large improvements in three-talker settings.

One-sentence Summary

Authors from IEEE-affiliated institutions propose a two-stage acoustic adaptation framework for LLM-based multi-talker ASR that injects talker-aware evidence via gated cross-attention adapters and LoRA refinement, significantly improving robustness in challenging three-talker mixtures where standard prefix prompting fails.

Key Contributions

- The paper introduces a systematic comparison of three Connectionist Temporal Classification (CTC)-derived prefix variants with increasing acoustic content to evaluate their effectiveness in providing explicit guidance for Large Language Model (LLM) decoders in multi-talker settings.

- A lightweight gated residual cross-attention adapter is proposed to inject talker-aware acoustic embeddings as external memory after the self-attention sub-layer, enabling dynamic access to fine-grained acoustic evidence at every decoding step.

- A two-stage acoustic adaptation framework utilizing low-rank updates (LoRA) is presented to refine both the cross-attention adapters and pretrained LLM self-attention projections, with experiments on Libri2Mix and Libri3Mix demonstrating consistent performance gains, particularly in challenging three-talker mixtures.

Introduction

Large Language Models (LLMs) serve as powerful decoders for Serialized Output Training in multi-talker Automatic Speech Recognition, yet their performance drops significantly when handling complex three-talker mixtures. Prior approaches rely on projecting acoustic evidence into a static prefix, which often results in lossy representations that fail to provide the fine-grained grounding needed to disentangle densely interleaved speech streams. To address this, the authors propose a two-stage acoustic adaptation framework that injects talker-aware acoustic evidence directly into the LLM decoder using a lightweight gated residual cross-attention adapter. They further refine both the adapter and the LLM's self-attention projections with parameter-efficient LoRA updates, ensuring stable training and robust performance even under limited data conditions.

Dataset

-

Dataset Composition and Sources: The authors evaluate their models on LibriMix, a benchmark for overlapped-speech recognition built upon the LibriSpeech corpus. Additive noise for noisy conditions is sampled from the WHAM! corpus.

-

Subset Details:

- Libri2Mix: Synthesized from the train-clean-100, train-clean-360, dev-clean, and test-clean subsets of LibriSpeech using official scripts and standard ESPnet offset settings for two-talker configurations. The training set contains approximately 270 hours of speech, while the development and test sets each contain about 11 hours.

- Libri3Mix: Generated using custom offset files to ensure diverse onset-time configurations for three-talker mixtures. The training set comprises approximately 186 hours of speech, with development and test sets holding about 11 hours each.

-

Usage in Model Training: The authors follow the official LibriMix protocol to generate both Libri2Mix and Libri3Mix mixtures. These datasets serve as the primary evaluation benchmark, with the training splits used for model optimization and the development and test splits for performance assessment.

-

Processing and Metadata: The team utilized official LibriMix scripts to synthesize clean mixtures and applied specific offset files to control talker onset times. While standard settings were used for two-talker scenarios, custom offset files were constructed for three-talker mixtures to increase configuration diversity, with these files scheduled for release after the review period.

Method

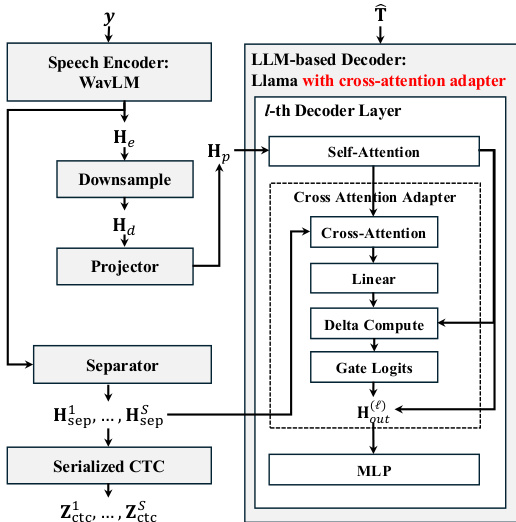

The authors propose a framework for LLM-based multi-talker ASR that integrates acoustic evidence directly into the decoder. The system consists of a speech encoder, a separator for talker-specific streams, and an LLM decoder enhanced with a cross-attention adapter.

Refer to the framework diagram for the overall architecture. The input waveform y is processed by a Speech Encoder (WavLM) to produce frame-level representations He. These features undergo temporal reduction via downsampling to Hd and are projected to the LLM hidden dimension Hp. Simultaneously, a Separator module processes the encoder output to generate S talker-specific streams Hsep1,…,HsepS, which are also used for Serialized CTC supervision.

The LLM-based Decoder, based on Llama, incorporates a specialized Cross Attention Adapter within each decoder layer. As shown in the figure below, the adapter is inserted after the Self-Attention sub-layer. It takes the hidden states from self-attention as queries and the projected acoustic memory as keys and values. The adapter computes a context vector which is then processed through a Linear layer and a Delta Compute block. A Gate Logits mechanism controls the residual update, ensuring that the acoustic information is injected without disrupting the pre-trained language representations.

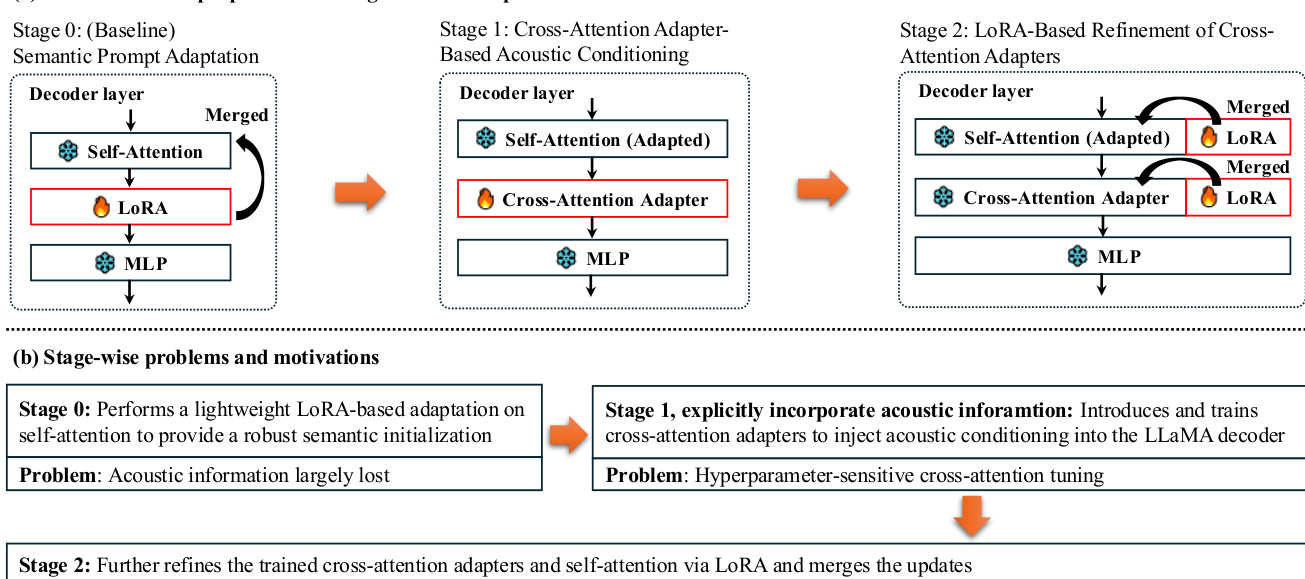

The training process follows a two-stage adaptation strategy designed to balance semantic initialization with robust acoustic conditioning. The pipeline and motivations for each stage are illustrated in the figure below.

Stage 0 serves as a baseline where the LLM decoder is adapted using LoRA on the self-attention projections. This provides a robust semantic initialization without explicit acoustic injection. Stage 1 introduces the gated cross-attention adapter to explicitly incorporate acoustic information. This stage trains the adapter to inject talker-aware acoustic evidence into the decoder via a gated residual update. However, training these adapters can be hyperparameter-sensitive. To address this, Stage 2 applies LoRA-based refinement to both the cross-attention adapters and the self-attention projections. This refinement strengthens the adaptation capacity and improves robustness under limited data. Finally, the learned low-rank updates are merged into the base weights, resulting in a model with no additional parameters at inference time.

Experiment

- LLM-based systems significantly improve performance on two-talker mixtures by leveraging semantic priors but struggle with three-talker scenarios due to insufficient context resolution in static prefix conditioning.

- Acoustic-rich prefixes outperform text-only prompts by providing better constraints for LLM decoding, yet one-shot prefixing alone remains inadequate for fine-grained talker assignment in heavily overlapped speech.

- Decoder-side acoustic injection via cross-attention yields substantial gains in three-talker conditions by enabling step-wise access to acoustic memory, whereas naive stacked cross-attention can degrade performance in easier two-talker settings due to over-conditioning.

- Gated cross-attention adaptation offers more stable and effective acoustic conditioning than naive stacking by regulating injection levels and preserving pretrained language representations, though it still lags behind serialized CTC alignment in the most challenging regimes.

- Stage-2 LoRA refinement enhances robustness and reduces hyperparameter sensitivity, with joint refinement of cross-attention adapters and self-attention projections consistently delivering the best overall results, particularly for 3B-scale backbones.

- The proposed method achieves a clear advantage over existing pipelines in three-talker settings, and larger LLM decoders consistently outperform models trained from scratch even when using serialized CTC references.