Command Palette

Search for a command to run...

Generative World Renderer

Generative World Renderer

Zheng-Hui Huang Zhixiang Wang Jiaming Tan Ruihan Yu Yidan Zhang Bo Zheng Yu-Lun Liu Yung-Yu Chuang Kaipeng Zhang

Abstract

Scaling generative inverse and forward rendering to real-world scenarios is bottlenecked by the limited realism and temporal coherence of existing synthetic datasets. To bridge this persistent domain gap, we introduce a large-scale, dynamic dataset curated from visually complex AAA games. Using a novel dual-screen stitched capture method, we extracted 4M continuous frames (720p/30 FPS) of synchronized RGB and five G-buffer channels across diverse scenes, visual effects, and environments, including adverse weather and motion-blur variants. This dataset uniquely advances bidirectional rendering: enabling robust in-the-wild geometry and material decomposition, and facilitating high-fidelity G-buffer-guided video generation. Furthermore, to evaluate the real-world performance of inverse rendering without ground truth, we propose a novel VLM-based assessment protocol measuring semantic, spatial, and temporal consistency. Experiments demonstrate that inverse renderers fine-tuned on our data achieve superior cross-dataset generalization and controllable generation, while our VLM evaluation strongly correlates with human judgment. Combined with our toolkit, our forward renderer enables users to edit styles of AAA games from G-buffers using text prompts.

One-sentence Summary

Researchers from Alaya Studio and multiple universities introduce a large-scale dataset from AAA games to bridge the realism gap in bidirectional rendering. By providing synchronized RGB and G-buffer frames, this work enables robust inverse rendering and high-fidelity G-buffer-guided video generation, surpassing prior methods limited by synthetic data scarcity.

Key Contributions

- The paper introduces a scalable G-buffer acquisition pipeline that renders multi-channel data to a unified canvas via hardware-accelerated capture, enabling temporally synchronized recording without modifying the game engine.

- A fine-tuned video inverse rendering model is presented that leverages motion-augmented training on game data to achieve state-of-the-art accuracy in depth, normal, albedo, and material parameter estimation on both synthetic and real-world benchmarks.

- The work demonstrates a practical game editing application by adapting a text-to-video model to accept G-buffers as conditional inputs, allowing users to manipulate lighting and environmental effects through text prompts during inference.

Introduction

No source text was provided to summarize. Please supply the abstract or body snippet of the research paper so I can generate the background summary with the required technical context, limitations, and contributions.

Dataset

-

Dataset Composition and Sources The authors curate a large-scale, dynamic dataset from two visually complex AAA games: Cyberpunk 2077 and Black Myth: Wukong. This collection bridges the domain gap between synthetic and real-world data by providing 4 million continuous frames at 720p resolution and 30 FPS. The dataset uniquely pairs synchronized RGB video with five high-fidelity G-buffer channels (depth, normals, albedo, metallic, and roughness) across diverse environments, including urban and natural scenes under varying weather conditions like rain, fog, and snow.

-

Key Details for Each Subset

- Cyberpunk 2077 Subset: Captured using semi-automated driving setups with long-range waypoints to generate continuous trajectories with variable speeds, alongside walking sequences for indoor coverage. This subset features a higher proportion of metallic surfaces and balanced luminance, reflecting its urban, metal-rich themes.

- Black Myth: Wukong Subset: Derived from exploration sequences in completed save files, deliberately avoiding combat to focus on diverse environmental traversal. This subset contains more high-roughness regions and lower luminance values, consistent with its natural, shadowed outdoor settings.

- Filtering Rules: The authors exclude clips where both the scene content and camera remain static throughout the sequence. Frames with excessively low luminance are also removed to ensure quality.

-

Data Usage in the Model The dataset serves as the primary supervision signal for training bidirectional rendering models, specifically fine-tuning Diffusion-based architectures for both inverse rendering (material decomposition) and forward rendering (G-buffer-guided video generation). The authors utilize the data to improve cross-dataset generalization and temporal coherence, enabling models to handle long-tail complexities such as volumetric effects and rapid motion. A novel VLM-based evaluation protocol is employed to assess semantic, spatial, and temporal consistency where traditional metrics fall short.

-

Processing and Construction Strategies

- Capture Pipeline: The team uses a non-intrusive dual-screen stitched capture method that intercepts the rendering pipeline at the graphics API level via ReShade, avoiding decompilation or asset extraction.

- Normal Reconstruction: Since only world-space normals are reliably available, the authors reconstruct camera-space normals from the depth buffer using inverse projection and finite differences.

- Channel Decoupling: To prevent compression artifacts, material channels like metallic and roughness are decoupled and rendered into spatially distinct screen regions before capture.

- Motion Blur Synthesis: While the engine captures sharp canonical RGB frames, the authors synthesize motion-blurred variants offline using RIFE for frame interpolation and linear domain averaging to better match real-world imaging conditions.

- Metadata Annotation: An LLM (Qwen3-VL) analyzes sampled frames to generate categorical labels for texture, weather, scene type (indoor/outdoor), and motion dynamics for each clip.

Method

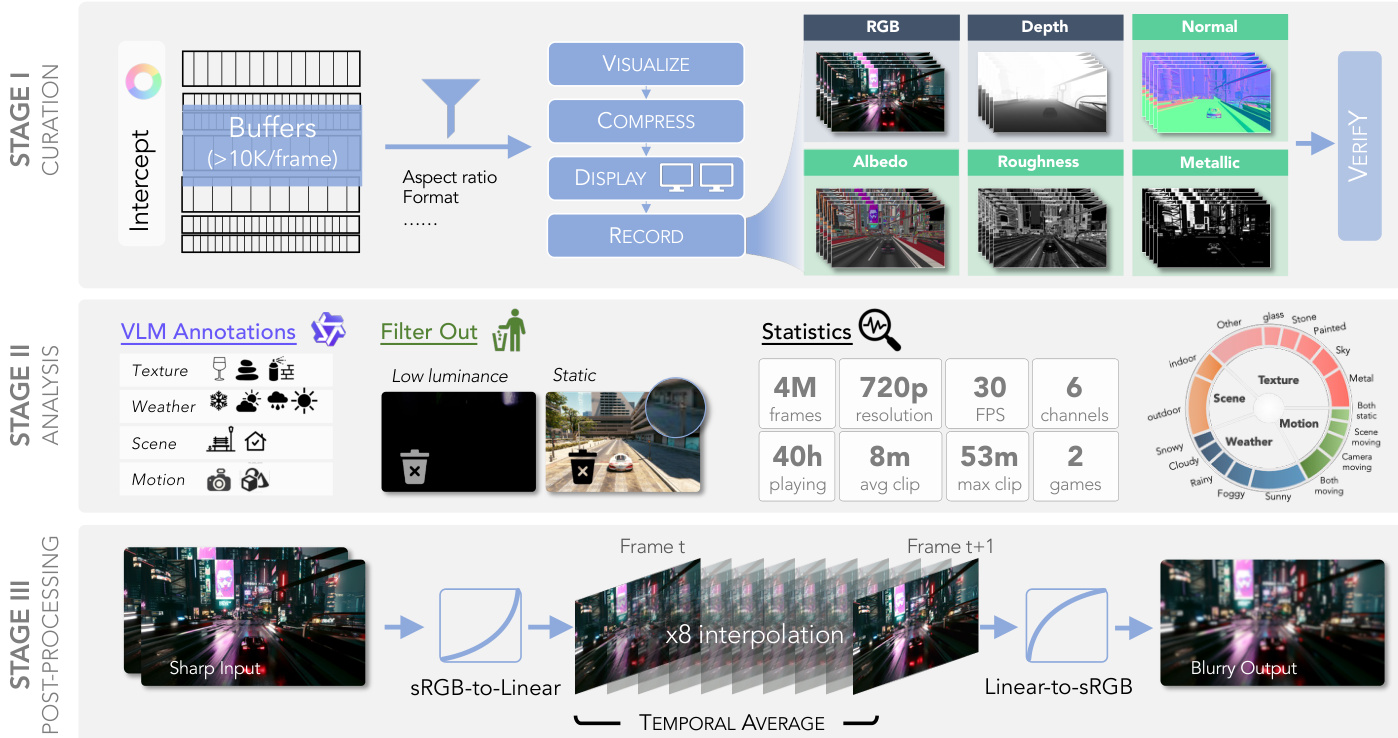

The proposed framework consists of three distinct stages: Curation, Analysis, and Post-Processing. In the initial Curation stage, the authors address the high cost of directly exporting multi-channel G-buffers by implementing a synchronized multi-screen recording strategy. Instead of relying on expensive storage bandwidth or GPU-to-CPU readback, the system shades target buffers to the screen and records them via hardware-accelerated capture. To maintain strict temporal synchronization across all six channels, a mosaic compositing strategy is employed where buffers are rendered onto a unified canvas.

As illustrated in the framework diagram, this process allows for the capture of RGB, Depth, Normal, Albedo, Roughness, and Metallic channels simultaneously. A verification step follows, where metallic maps are evaluated based on semantic correctness, appearance quality, and temporal consistency to ensure material plausibility. To overcome display resolution limits, the setup stitches two 2K monitors, enabling recording at an effective 720p resolution per channel while preserving the intended aspect ratio through center-cropping.

The pipeline then proceeds to the Analysis stage, where data quality is ensured through automated filtering and annotation. VLMs are leveraged to annotate frames based on texture, weather, scene, and motion attributes. Frames exhibiting low luminance or static content are filtered out to maintain dataset diversity and quality. Statistical analysis confirms the collection of 4M frames at 30 FPS, covering various environmental conditions and motion types.

Finally, the Post-Processing stage generates motion blur effects to simulate real-world camera capture. The process begins with converting sharp input frames from sRGB to linear space. The system then performs 8x interpolation between consecutive frames, denoted as Frame t and Frame t+1, followed by a temporal average operation. The resulting data is converted back from linear to sRGB space to produce the final blurry output, effectively synthesizing motion blur without requiring physical camera movement.

Experiment

- Real-scene evaluation using Vision-Language Models (VLMs) validates that the proposed dataset improves generalization for material prediction in complex, ground-truth-free environments by leveraging global context and temporal reasoning.

- Quantitative benchmarks on synthetic datasets (Black Myth: Wukong and Sintel) demonstrate that fine-tuning on the new dataset yields superior performance in depth, normal, and albedo estimation compared to existing DiffusionRenderer baselines.

- Qualitative analysis confirms the method effectively disentangles intrinsic scene properties, producing cleaner albedo maps and robust material predictions that resist atmospheric disruptions like smoke and volumetric scattering.

- Ablation studies reveal that motion augmentation during training significantly enhances temporal stability and reduces artifacts in videos with strong motion blur.

- Relighting experiments show that the improved G-buffers enable off-the-shelf forward renderers to generate illumination-consistent novel views, proving the efficacy of the data-centric approach.

- Game editing evaluations indicate that using high-quality G-buffers as conditional inputs achieves a better balance between editability and visual fidelity than RGB-derived or stochastic editing baselines, allowing for seamless integration of complex atmospheric effects.