OpenGame: Open Agentic Coding for Games

Abstract

Game development sits at the intersection of creative design and intricate software engineering, demanding the joint orchestration of game engines, real-time loops, and tightly coupled state across many files. While Large Language Models (LLMs) and code agents now solve isolated programming tasks with ease, they consistently stumble when asked to produce a fully playable game from a high-level design, collapsing under cross-file inconsistencies, broken scene wiring, and logical incoherence. We bridge this gap with OpenGame, the first open-source agentic framework explicitly designed for end-to-end web game creation. At its core lies Game Skill, a reusable, evolving capability composed of a Template Skill that grows a library of project skeletons from experience and a Debug Skill that maintains a living protocol of verified fixes - together enabling the agent to scaffold stable architectures and systematically repair integration errors rather than patch isolated syntax bugs. Powering this framework is GameCoder-27B, a code LLM specialized for game engine mastery through a three-stage pipeline of continual pre-training, supervised fine-tuning, and execution-grounded reinforcement learning. Since verifying interactive playability is fundamentally harder than checking static code, we further introduce OpenGame-Bench, an evaluation pipeline that scores agentic game generation along Build Health, Visual Usability, and Intent Alignment via headless browser execution and VLM judging. Across 150 diverse game prompts, OpenGame establishes a new state-of-the-art. We hope OpenGame pushes code agents beyond discrete software engineering problems and toward building complex, interactive real-world applications. Our framework will be fully open-sourced.

One-sentence Summary

Researchers propose OpenGame, the first open-source agentic framework designed for end-to-end web game creation, which utilizes Game Skill to integrate a growing library of project templates with a systematic debugging protocol to overcome cross-file inconsistencies and logical incoherence.

Key Contributions

- The paper introduces OpenGame, an open-source agentic framework designed for end-to-end web game creation from natural-language specifications using the Phaser engine.

- This work presents the Game Skill mechanism, which utilizes a Template Skill for stable project scaffolding and a Debug Skill for cumulative error repair to resolve cross-file inconsistencies.

- The authors develop GameCoder-27B, a domain-specialized foundation model, and OpenGame-Bench, a dynamic evaluation pipeline that measures build health, visual usability, and intent alignment.

Introduction

Automated game development requires the complex orchestration of real-time loops, physics, and tightly coupled state across multiple files. While general-purpose Large Language Models can solve isolated programming tasks, they often fail at end-to-end game creation due to logical incoherence, engine-specific knowledge gaps, and cross-file inconsistencies. To address these challenges, the authors introduce OpenGame, an open-source agentic framework designed for end-to-end web game creation. Their contributions are threefold: the Game Skill capability, which uses evolving templates and a living debugging protocol to stabilize project architecture; a domain-specialized model named GameCoder-27B; and OpenGame-Bench, a new evaluation pipeline that assesses dynamic playability rather than just static code correctness.

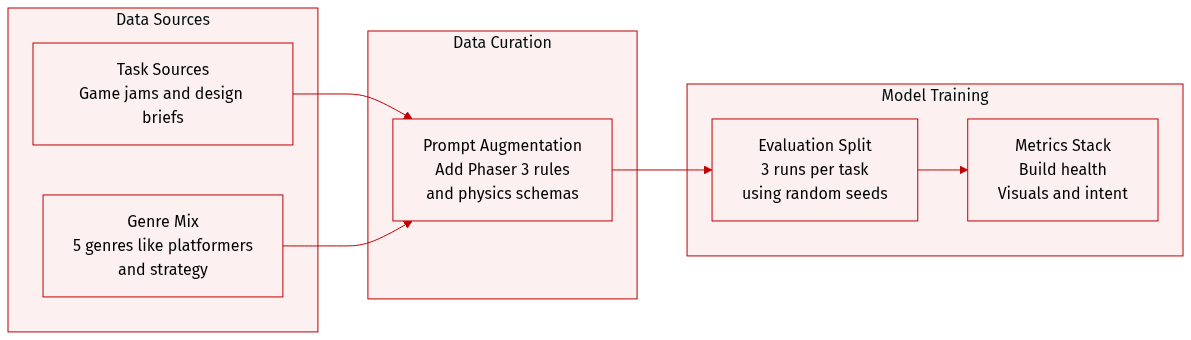

Dataset

The authors introduce OpenGame-Bench, a benchmark designed to evaluate AI agents in multi-file game development. The dataset details are as follows:

- Dataset Composition and Sources: The benchmark consists of 150 unique tasks derived from natural language prompts. These prompts are sourced from curated public game-jam repositories and AI-assisted design briefs. The tasks are manually verified to ensure they are technically achievable within 2D web frameworks.

- Task Diversity and Subsets: The 150 tasks span five distinct game genres: platformers, top-down shooters, puzzle games, arcade classics, and strategy. The tasks are categorized by logic types, such as grid-based movement (e.g., Sokoban or Chess) and UI-heavy interactions (e.g., card games or visual novels).

- Processing and Prompt Augmentation: To prevent models from defaulting to single-file implementations and to test structural agentic capabilities, the authors augment all baseline prompts with an explicit instruction to use the Phaser 3 framework. For certain archetypes like platformers, the authors utilize structured metadata, including ASCII level design legends and specific physics and behavior schemas.

- Evaluation and Metrics: The authors use an engine-agnostic evaluation layer that runs via a headless browser. A successful run must build correctly, serve via a local HTTP server without fatal errors, and produce at least one non-empty screenshot. Performance is measured across three dimensions: Build Health (compilation and runtime stability), Visual Usability (visual coherence and animation), and Intent Alignment (adherence to requirements as judged by a Vision-Language Model). To account for stochasticity, each task is evaluated three times using different random seeds.
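
The run-level success gate and the three-seed protocol described above can be sketched as follows. This is a minimal illustration rather than the benchmark's actual code: the `RunResult` record, the 0-100 score scale, and the choice to average each metric over seeds are all assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class RunResult:
    """Outcome of one headless-browser run (hypothetical record layout)."""
    built: bool                 # the project build completed
    served_without_fatal: bool  # served over local HTTP with no fatal error
    screenshot_nonempty: bool   # at least one non-empty screenshot was captured
    build_health: float         # assumed 0-100 scale
    visual_usability: float     # assumed 0-100 scale
    intent_alignment: float     # assumed 0-100 scale


def is_successful(run: RunResult) -> bool:
    """A run succeeds only if all three gate conditions hold."""
    return run.built and run.served_without_fatal and run.screenshot_nonempty


def aggregate_task(runs: list[RunResult]) -> dict[str, float]:
    """Average each metric over the seeds of one task (averaging is an assumption)."""
    if not runs:
        raise ValueError("need at least one run per task")
    return {
        "success_rate": mean(1.0 if is_successful(r) else 0.0 for r in runs),
        "build_health": mean(r.build_health for r in runs),
        "visual_usability": mean(r.visual_usability for r in runs),
        "intent_alignment": mean(r.intent_alignment for r in runs),
    }
```

Keeping the gate (`is_successful`) separate from the graded scores makes it easy to report both a pass rate and per-dimension averages for the same set of runs.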

Method

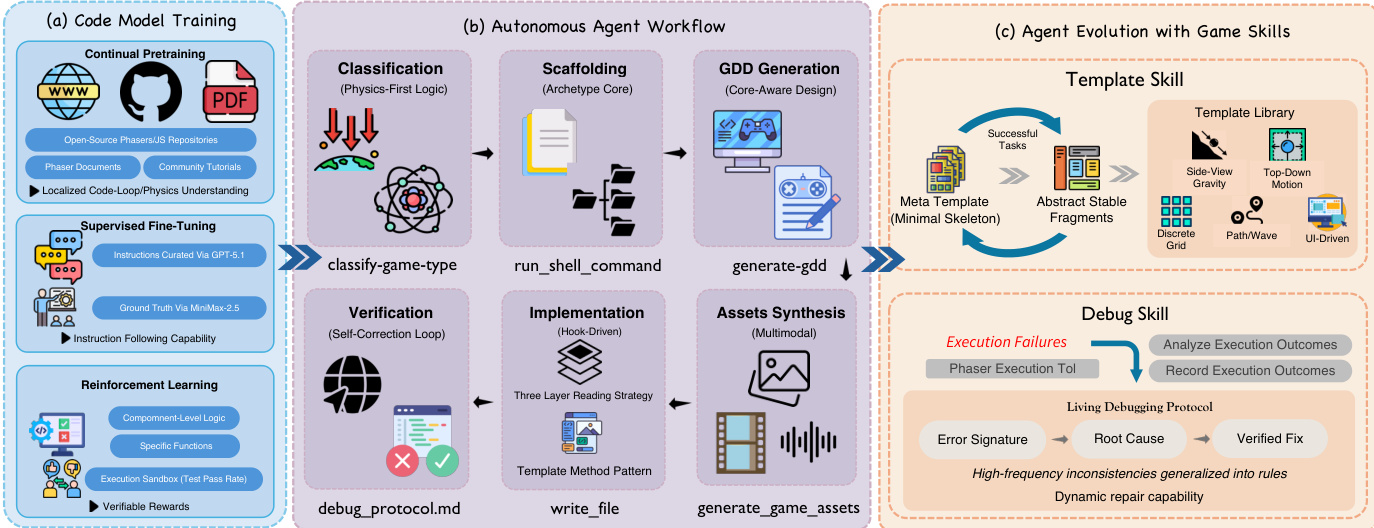

The OpenGame framework integrates a domain-specialized code model with a structured multimodal coding agent to enable autonomous game generation. The overall architecture is composed of three interconnected components: a multi-stage code-model training pipeline, an autonomous agent workflow, and an agent-evolution module that refines reusable game-development skills. The training pipeline establishes engine-specific priors for the base model, while the agent workflow translates natural-language game ideas into runnable projects through a six-phase process, and the evolution module continuously improves structural scaffolding and repair behavior using accumulated experience.

The base model, GameCoder-27B, is built upon a Qwen3.5-27B backbone and is trained through a three-stage pipeline to acquire domain-specific knowledge for interactive web game development. The first stage, Continual Pre-Training (CPT), adapts the model to the domain by assembling a large-scale corpus from open-source Phaser and JavaScript/TypeScript game repositories, along with official documentation and community tutorials. This stage establishes a strong prior over game loops, physics systems, asset usage, and state management patterns. The second stage, Supervised Fine-Tuning (SFT), aligns the model with instruction-following by synthesizing a diverse question-answer dataset using gpt-codex5.1 for complex game design prompts and minimax2.5 to produce high-quality target solutions. This synthetic distillation teaches the model to convert abstract creative intent into concrete code structure. The final stage, Reinforcement Learning (RL), refines code generation by applying execution-based feedback at the component level. The model synthesizes single-file gameplay logic and targeted functional modules, which are evaluated against predefined unit tests. The reward is computed from execution success and aggregate test pass rate, grounding the model in deterministic, executable logic before the downstream agent assembles these building blocks into a full multi-file project.
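
The source states that the RL reward combines execution success with the aggregate test pass rate but does not give the formula. The sketch below shows one plausible shape for such a reward. The `ExecutionReport` record, the zero-reward gate for code that fails to run, and the 0.3/0.7 weighting are assumptions chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutionReport:
    """Result of running one generated module against its unit tests (hypothetical)."""
    executed: bool     # the module loaded and ran without crashing
    tests_passed: int
    tests_total: int


def component_reward(report: ExecutionReport, exec_weight: float = 0.3) -> float:
    """Blend execution success and test pass rate into a reward in [0, 1].

    Assumed rule: code that does not execute earns nothing; code that executes
    earns `exec_weight` plus the remaining weight scaled by the pass rate.
    """
    if not 0.0 <= exec_weight <= 1.0:
        raise ValueError("exec_weight must lie in [0, 1]")
    if not report.executed:
        return 0.0
    if report.tests_total > 0:
        pass_rate = report.tests_passed / report.tests_total
    else:
        pass_rate = 0.0  # no tests available: only execution is rewarded
    return exec_weight + (1.0 - exec_weight) * pass_rate
```

Under these assumed weights, a module that runs and passes 3 of 4 tests scores about 0.825, while a module that crashes on load scores 0 regardless of how many tests it might otherwise have passed.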

The autonomous agent workflow orchestrates the generation process through six operational phases: initialization and classification, scaffolding, design generation, asset synthesis, code implementation, and verification.

The workflow begins with initialization and classification, where the agent invokes the classify-game-type tool to interpret the user's natural-language request. This tool applies a Physics-First Classification rule, categorizing the task based on physical constraints and spatial mechanics rather than ambiguous genre labels, which establishes a macro-level execution plan.

Following classification, the agent executes a scaffolding procedure using run_shell_command to copy the shared core, appropriate modules/{archetype} codebase, and relevant architectural documentation into the workspace, creating a stable structural baseline.

The agent then invokes generate-gdd to produce a technical Game Design Document (GDD), dynamically loading archetype-specific API constraints from the scaffolded documentation to ensure feasibility. The implementation roadmap is extracted from the GDD and refined into granular, file-specific actions using the todo_write tool.

In the asset synthesis phase, the agent reads asset_protocol.md to ensure parameter compliance and invokes generate-game-assets, leveraging multimodal generation models to synthesize backgrounds, character animations, static items, and audio assets from the GDD's asset registry. For tile-based games, generate-tilemap converts ASCII layouts into structured JSON tilemaps. The agent records the exact texture and asset keys required during implementation by reading the produced asset-pack.json, reducing downstream asset-reference hallucinations.

Context-aware code implementation follows, where the agent merges GDD parameters into gameConfig.json to enforce a data-driven interface.
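
The ASCII-to-tilemap conversion mentioned in the asset synthesis phase can be illustrated with a short sketch. The source does not specify the legend or the output schema of generate-tilemap, so the `LEGEND` and `ENTITIES` symbols and the JSON field names below are hypothetical.

```python
import json

# Hypothetical legend: ASCII characters mapped to tile indices (0 = empty).
LEGEND = {".": 0, "#": 1, "=": 2}
# Hypothetical entity markers that become spawn points instead of tiles.
ENTITIES = {"P": "player", "E": "enemy"}


def ascii_to_tilemap(rows: list[str], tile_size: int = 32) -> dict:
    """Convert equal-length ASCII rows into a flat tile array plus spawn points."""
    if not rows:
        raise ValueError("level must have at least one row")
    width = len(rows[0])
    data: list[int] = []
    spawns: list[dict] = []
    for y, row in enumerate(rows):
        if len(row) != width:
            raise ValueError(f"row {y} has length {len(row)}, expected {width}")
        for x, ch in enumerate(row):
            if ch in ENTITIES:
                # Entity cells hold no tile; record a pixel-space spawn point.
                spawns.append({"type": ENTITIES[ch], "x": x * tile_size, "y": y * tile_size})
                data.append(0)
            elif ch in LEGEND:
                data.append(LEGEND[ch])
            else:
                raise ValueError(f"unknown symbol {ch!r} at ({x}, {y})")
    return {
        "width": width,
        "height": len(rows),
        "tileSize": tile_size,
        "data": data,  # row-major tile indices
        "spawns": spawns,
    }


level = ["....", "P..E", "####"]
print(json.dumps(ascii_to_tilemap(level), indent=2))
```

Rejecting unknown symbols and ragged rows at conversion time surfaces layout mistakes before any game code references the map.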
To mitigate context overflow, a Three-Layer Reading Strategy is employed, progressively loading an API summary, the targeted source file, and the implementation guide. Code generation adheres to a Template Method Pattern, where the agent copies template files and overrides designated hook methods to inject game-specific logic while preserving the deterministic lifecycle management of the base classes.

The final phase is verification and self-correction, where the agent performs a static self-review over common generative failure modes using debug_protocol.md, executes npm run build and npm run test under headless browser evaluation, and parses compiler output to localize and iteratively repair faulty scripts until a playable game is obtained.
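
The Template Method Pattern described above can be sketched independently of any game engine. In this illustration the base class fixes the lifecycle order and the subclass only fills in hooks. The class names, hook names, and logged step names are hypothetical and do not come from the OpenGame templates, which target Phaser rather than Python.

```python
class BaseLevelScene:
    """Template base class: owns the lifecycle order and exposes hooks."""

    def __init__(self) -> None:
        self.log: list[str] = []  # records the order in which steps ran

    def create(self) -> None:
        """Fixed creation sequence; subclasses are not meant to override this."""
        self.log.append("load_map")
        self.setup_player()       # hook
        self.setup_enemies()      # hook
        self.log.append("start_camera")

    def update(self, dt: float) -> None:
        """Fixed per-frame sequence: base physics first, then game-specific logic."""
        self.log.append("physics_step")
        self.on_update(dt)        # hook

    # Hooks: no-op defaults keep the lifecycle valid even if nothing is overridden.
    def setup_player(self) -> None:
        pass

    def setup_enemies(self) -> None:
        pass

    def on_update(self, dt: float) -> None:
        pass


class CoinRunScene(BaseLevelScene):
    """Game-specific scene: overrides hooks only, never the lifecycle methods."""

    def setup_player(self) -> None:
        self.log.append("spawn_player")

    def on_update(self, dt: float) -> None:
        self.log.append("check_coin_pickups")


scene = CoinRunScene()
scene.create()
scene.update(1 / 60)
print(scene.log)
```

Because the generated code touches only the hooks, a mistake in game-specific logic cannot reorder or skip the base lifecycle steps, which is the property the source attributes to this design.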

The agent evolution module enhances the framework's capabilities through reusable game-development skills, specifically Template Skill and Debug Skill. Template Skill stabilizes project structure by maintaining an evolving template library L that grows from a minimal meta template M0 into specialized template families reflecting recurring physics and interaction regimes. For a new request, the agent selects an appropriate template family from L and instantiates it to obtain a stable project skeleton, introducing game-specific content through a limited set of extension points to reduce the search space and improve cross-file consistency. Debug Skill targets systematic failures by maintaining a living debugging protocol P that is updated from observed build, test, and runtime outcomes. Each time a failure occurs, the agent records a structured entry containing an error signature, root cause, and verified fix, which is added to P and reused in future tasks. The protocol includes lightweight pre-execution validations for high-frequency inconsistency classes, such as mismatched asset keys or invalid scene transitions, and generalizes recurring failure patterns into reusable rules. This cumulative and persistent debugging knowledge improves reliability over time without increasing prompt complexity. The overall execution of Game Skill, as summarized in Algorithm 1, combines these components: the agent selects a template family, instantiates a project skeleton, generates game-specific content, and iteratively verifies, diagnoses, and repairs the project until it becomes buildable and runnable, logging validated fixes back to the protocol.
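
A minimal sketch of how such a living debugging protocol might be stored and queried is shown below. The three entry fields follow the description above (error signature, root cause, verified fix). The class names, the substring-matching rule, and the sample error text are assumptions for illustration, not the format of the framework's protocol.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class FixEntry:
    """One protocol entry with the three fields named in the text."""
    error_signature: str  # substring that identifies the failure in tool output
    root_cause: str
    verified_fix: str


@dataclass
class DebugProtocol:
    """Growing store of verified fixes, keyed by error signature."""
    entries: dict[str, FixEntry] = field(default_factory=dict)

    def record(self, entry: FixEntry) -> None:
        # Keep the first verified fix per signature so the protocol stays compact.
        self.entries.setdefault(entry.error_signature, entry)

    def lookup(self, error_output: str) -> list[FixEntry]:
        """Return every known entry whose signature appears in the output."""
        return [e for sig, e in self.entries.items() if sig in error_output]


def missing_asset_keys(referenced: set[str], asset_pack_keys: set[str]) -> set[str]:
    """Pre-execution check: keys used in code but absent from the asset pack."""
    return referenced - asset_pack_keys


protocol = DebugProtocol()
protocol.record(FixEntry(
    error_signature="Texture key not found",  # hypothetical error text
    root_cause="code referenced a key that is missing from the asset pack",
    verified_fix="rename the key in code to match the asset pack entry",
))
print(protocol.lookup("Error: Texture key not found: hero_run"))
print(missing_asset_keys({"hero_run", "coin"}, {"hero_idle", "coin"}))
```

The pre-execution check catches the mismatched-asset-key class before a build is attempted, while `lookup` lets fixes verified on earlier tasks be reused when the same signature reappears.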

Experiment

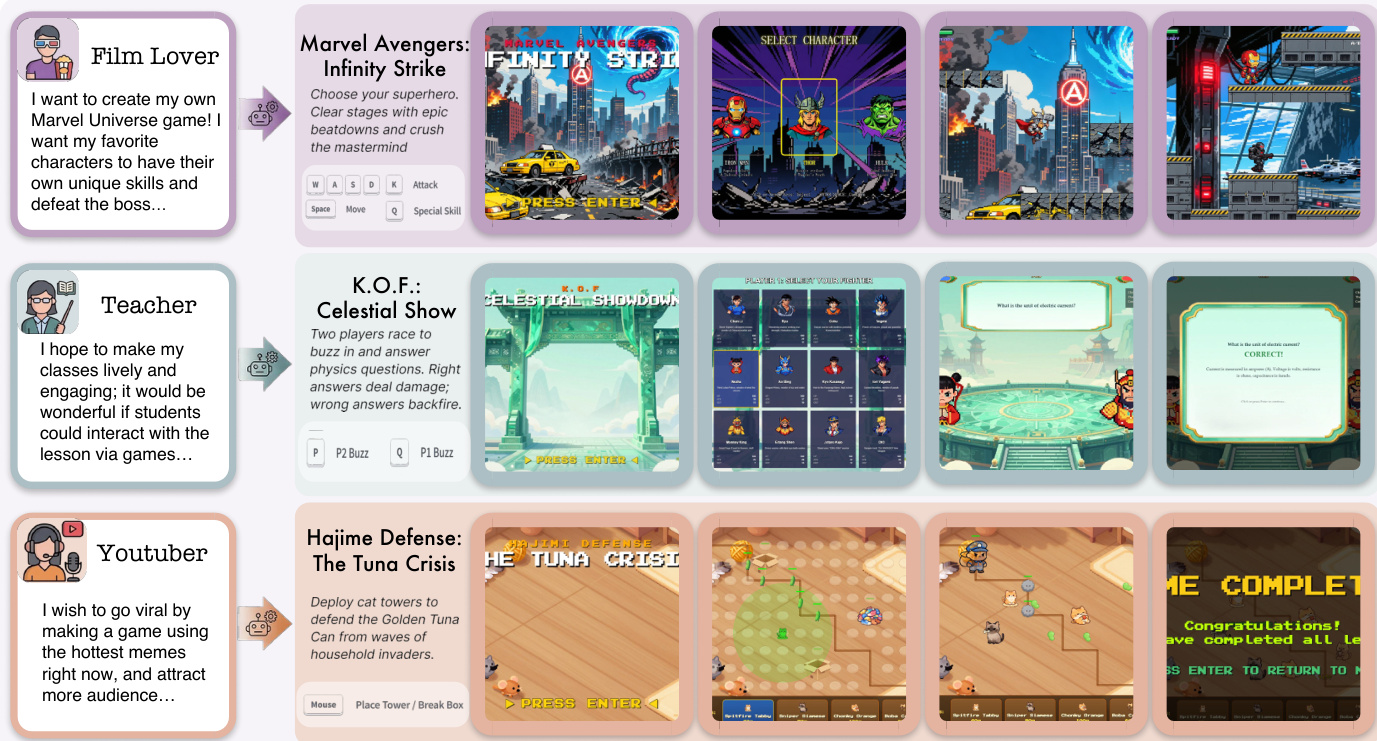

OpenGame is evaluated on a benchmark of 150 browser game tasks using an automated pipeline that measures build correctness, visual quality, and intent satisfaction. The results demonstrate that OpenGame establishes a new state of the art by outperforming strong direct LLM baselines and established agentic frameworks, particularly in preserving user-specified mechanics through its structured planning and iterative verification. Ablation studies reveal that the system's success stems from a combination of domain-specific model training, a template-driven agentic workflow, and an evolving library of specialized game skills that facilitate robust debugging and multi-file synthesis.

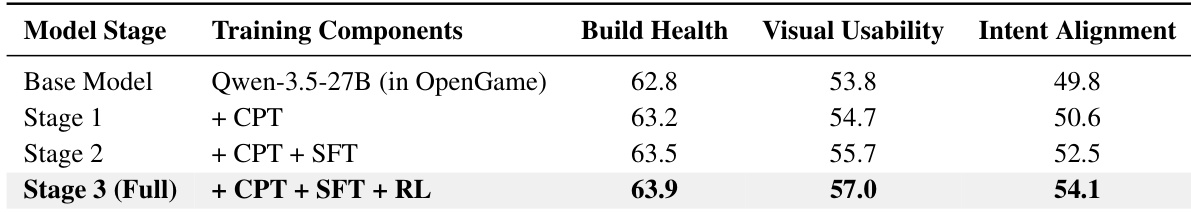

The authors conduct an ablation study to evaluate how each training component contributes to the performance of the GameCoder-27B model within the OpenGame framework. Each training stage (Continual Pre-Training, Supervised Fine-Tuning, and Reinforcement Learning) adds incremental improvements across Build Health, Visual Usability, and Intent Alignment, with Supervised Fine-Tuning providing the largest gain in Intent Alignment. The final model outperforms the base model on all metrics, indicating that domain-specific training improves the model's ability to generate functional, intent-aligned game code.

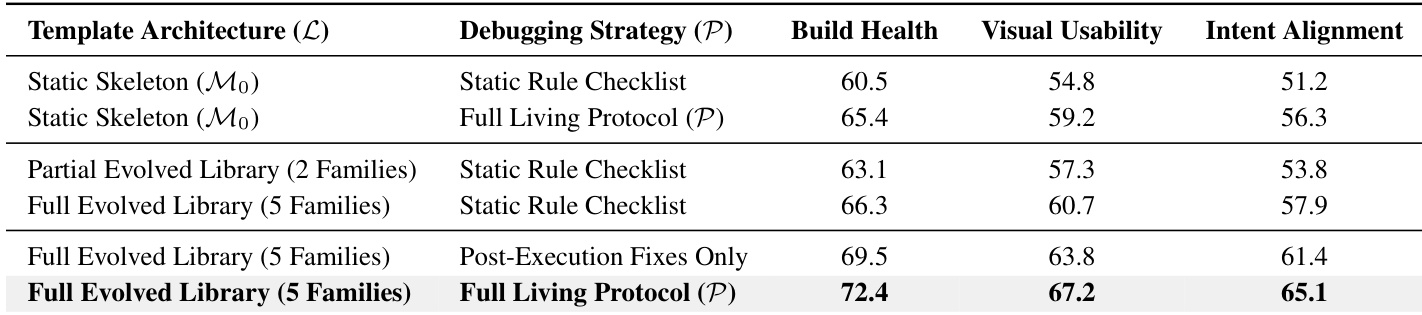

The authors conduct ablation studies on two components of the OpenGame framework: template architecture and debugging strategy. Combining the full evolved library with the full living debugging protocol yields the highest performance across all metrics, demonstrating the importance of both structured scaffolding and iterative verification in game generation. The full evolved library significantly improves performance over a static skeleton or a partially evolved library, and the full living protocol outperforms static rule checklists and post-execution fixes, highlighting the importance of proactive debugging mechanisms.

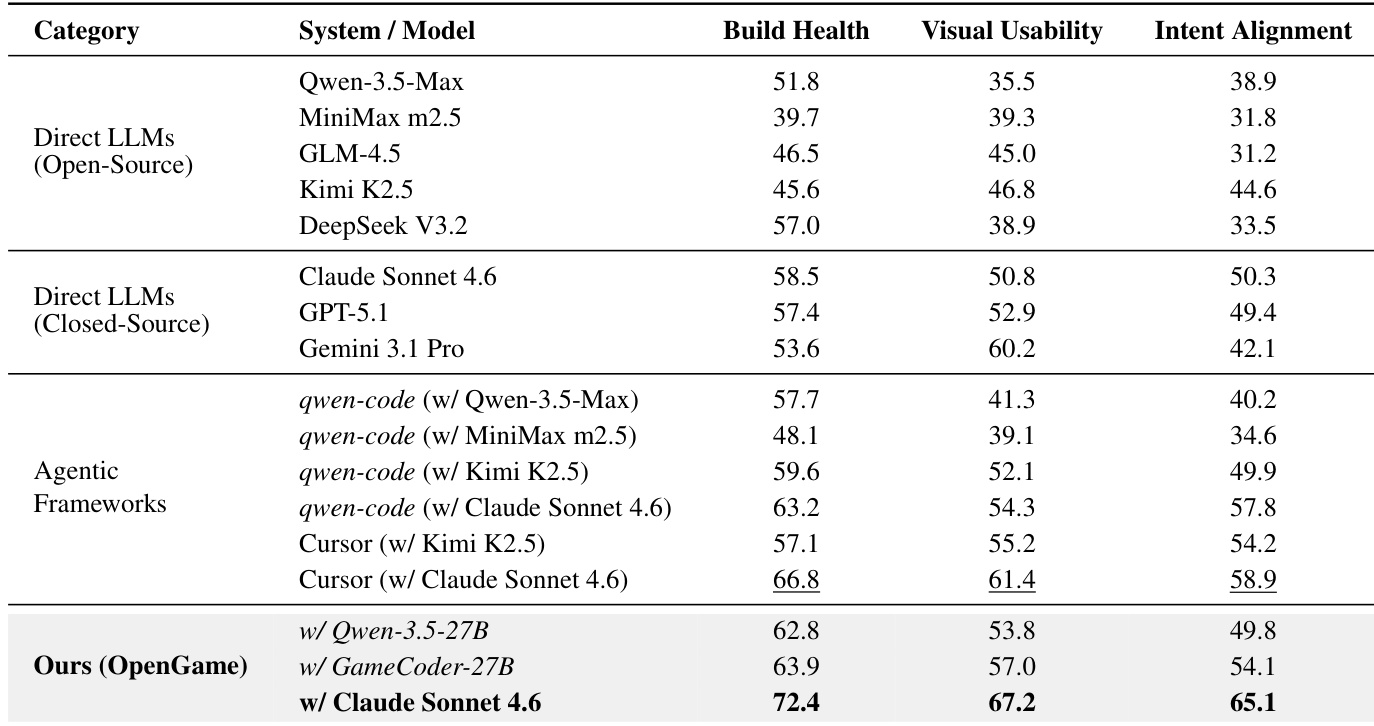

The authors evaluate OpenGame on a benchmark of browser game tasks, measuring build correctness, visual quality, and intent satisfaction. OpenGame achieves state-of-the-art performance, particularly when using Claude Sonnet 4.6, outperforming all direct LLMs and agentic frameworks on all three metrics. The largest improvement is in Intent Alignment, indicating better preservation of user-specified game mechanics. The framework's structured planning, template-based scaffolding, and iterative verification contribute to this result, especially in physics-centric genres: OpenGame performs strongest in platformers and top-down shooters, with notable performance drops in abstract genres such as strategy and puzzle/UI.

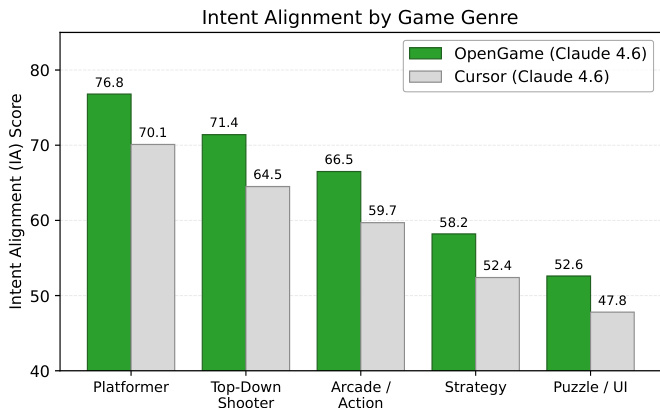

The authors also compare OpenGame with the Cursor baseline across game genres. OpenGame achieves higher Intent Alignment than Cursor in every genre, with the largest gains in platformers and top-down shooters. The framework performs strongly in physics-centric games but struggles more with abstract genres such as strategy and puzzle/UI, where silent logic errors are harder to detect. Its effectiveness is attributed to structured planning, template-based scaffolding, and an iterative verification pipeline, which better preserve user-specified mechanics.

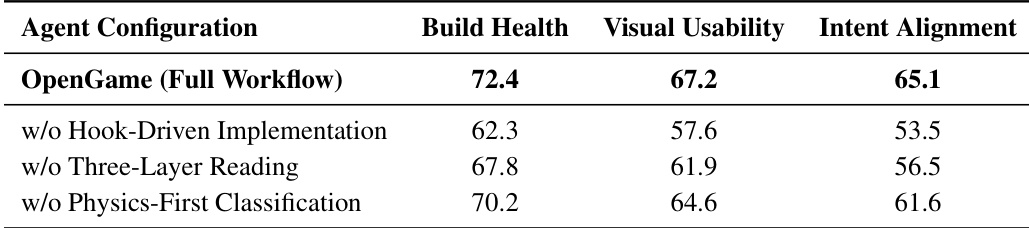

The authors evaluate the OpenGame system by comparing it against various baselines and conducting ablation studies to isolate key components of its architecture. The full system achieves the highest scores on all metrics and outperforms every ablated configuration, with significant drops when critical workflow mechanisms are removed. Removing the Hook-Driven Implementation causes the largest performance drop, especially in Build Health and Intent Alignment. Removing the Three-Layer Reading Strategy produces a notable decline in Intent Alignment, indicating its importance in managing complex multi-file synthesis. Together, the results show that structured planning and iterative verification are essential for maintaining build stability and intent satisfaction in game generation.

Through a series of ablation studies and benchmark comparisons, the authors evaluate the contributions of domain-specific training, template architectures, and iterative verification within the OpenGame framework. The results demonstrate that sequential training stages and the integration of evolved libraries with proactive debugging protocols significantly enhance build stability and intent alignment. Ultimately, the full OpenGame system achieves state-of-the-art performance across various browser game tasks, showing particular strength in physics-centric genres due to its structured planning and robust implementation mechanisms.