MiA-Signature: Approximating Global Activation for Long-Context Understanding

Yuqing Li Jiangnan Li Mo Yu Zheng Lin Weiping Wang Jie Zhou

Abstract

A growing body of work in cognitive science suggests that reportable conscious access is associated with global ignition over distributed memory systems, while such activation is only partially accessible, as individuals cannot directly access or enumerate all activated contents. This tension suggests a plausible mechanism: cognition may rely on a compact representation that approximates the global influence of activation on downstream processing. Inspired by this idea, we introduce the concept of the Mindscape Activation Signature (MiA-Signature), a compressed representation of the global activation pattern induced by a query. In LLM systems, this is instantiated via submodular-based selection of high-level concepts that cover the activated context space, optionally refined through lightweight iterative updates using working memory. The resulting MiA-Signature serves as a conditioning signal that approximates the effect of the full activation state while remaining computationally tractable. Integrating MiA-Signatures into both RAG and agentic systems yields consistent performance gains across multiple long-context understanding tasks.

One-sentence Summary

The authors propose MiA-Signature, a cognitively inspired conditioning signal that approximates global activation patterns for long-context understanding via submodular-based concept selection and lightweight iterative refinement, delivering consistent performance gains across multiple long-context understanding tasks when integrated into retrieval-augmented generation and agentic systems.

Key Contributions

- This work introduces the Mindscape Activation Signature (MiA-Signature), a compressed representation that approximates the global activation pattern induced by a query across a semantic memory space.

- The framework instantiates the signature through submodular-based selection of high-level concepts to cover the activated context, optionally refined via lightweight iterative working memory updates to ensure computational tractability.

- Integrating MiA-Signatures into retrieval-augmented generation and agentic systems yields consistent performance gains across multiple long-context understanding tasks.

Introduction

Large language models and retrieval-augmented systems increasingly depend on external memory to handle complex queries, making efficient long-context understanding a critical capability for modern AI applications. Current architectures typically treat memory access as a series of local document retrievals, which implicitly assumes that reasoning only requires a narrow set of directly fetched evidence. This localized paradigm overlooks cognitive science insights showing that human cognition actually depends on a transient, large-scale activation across distributed memory networks that is subsequently compressed into a tractable internal representation. To bridge this gap, the authors introduce the Mindscape Activation Signature, a compressed query-conditioned signal that approximates global activation over a semantic memory space. By leveraging submodular selection of high-level concepts and optional lightweight iterative refinement, the authors equip downstream retrieval and reasoning modules with a holistic semantic context. Integrating this approach into both retrieval-augmented generation and agentic workflows consistently improves performance on long-context tasks while efficiently managing redundant memory stores.

Dataset

- Dataset Composition and Sources: The authors build their evaluation suite from four established long-context benchmarks drawn from English and Chinese detective novels and narrative texts. The collection includes DetectiveQA, NarrativeQA, NovelHopQA, and NoCha.

- Subset Details: DetectiveQA features 13 novels grouped into the Miss Marple and Hercule Poirot series for multiple-choice reasoning. NarrativeQA contains 37 books consolidated into 11 series based on shared protagonists or sequential story arcs for open-ended question answering. NovelHopQA assesses multi-hop reasoning over extended novel excerpts, while NoCha handles claim verification across complete novels.

- Data Usage and Evaluation Strategy: The authors use these benchmarks exclusively for evaluation rather than model training. The paper does not specify training splits or mixture ratios. Performance is measured using accuracy for multiple-choice and claim verification tasks, F1 scores for open-ended responses, and pair accuracy for NoCha. When gold evidence annotations are available, the authors also report Recall@10.

- Processing and Context Construction: Instead of treating individual novels as isolated documents, the authors merge books from the same franchise into unified multi-volume series. This aggregation expands the retrieval space to include related characters, plot events, and intentional distractors. Each question remains anchored to episode-specific evidence, but the model must navigate the broader merged context. The pipeline also applies dynamic query rewriting and evidence signature refinement to support complex reasoning chains during retrieval.
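The metrics named in the evaluation strategy can be sketched as follows. This is a minimal illustration, not the authors' evaluation code: the function names are hypothetical, F1 is assumed to be whitespace-token overlap F1, and pair accuracy is assumed to credit a claim pair only when every claim in the pair is judged correctly (the source does not define either metric).

```python
from collections import Counter, defaultdict


def recall_at_k(ranked_ids, gold_ids, k=10):
    """Fraction of gold evidence ids that appear in the top-k retrieved ids."""
    gold = set(gold_ids)
    if not gold:
        return 0.0
    return len(gold.intersection(ranked_ids[:k])) / len(gold)


def token_f1(prediction, reference):
    """Token-overlap F1 between two strings (assumed: lowercase, whitespace tokens)."""
    pred = prediction.lower().split()
    ref = reference.lower().split()
    if not pred or not ref:
        return float(pred == ref)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def pair_accuracy(results):
    """results: iterable of (pair_id, is_correct) tuples, one per claim.

    Assumption: a pair counts as correct only if all of its claims are correct.
    """
    by_pair = defaultdict(list)
    for pair_id, is_correct in results:
        by_pair[pair_id].append(is_correct)
    if not by_pair:
        return 0.0
    return sum(all(flags) for flags in by_pair.values()) / len(by_pair)
```

Under these assumptions, pair accuracy is stricter than per-claim accuracy: a system that labels every claim as true gets half the claims right but no pairs right when each pair contains one true and one false claim.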

Method

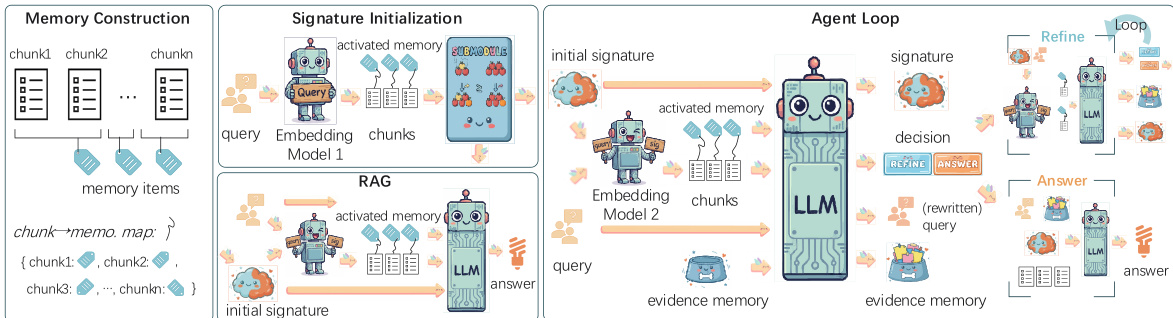

The authors leverage a framework centered on the MiA-Signature, a compact, query-conditioned representation of the globally activated memory region within a structured memory space, referred to as the mindscape. This signature serves as a surrogate for the full activation pattern induced by a query, enabling downstream retrieval and reasoning to access a global memory signal without requiring direct access to the entire activated memory pool. The mindscape is defined as a memory pool $M(D)$ associated with a long source $D$, where each memory unit $m_i$ is grounded in finer-grained evidence. The query-induced activation $a_q$ is a function mapping memory units to a relevance score, representing the semantic region of the mindscape that is activated. The MiA-Signature $\sigma^*(q)$ is derived as a compact subset of the high-level memory units $H(D)$, selected to best approximate the activated region $H_q$, balancing relevance, coverage, and diversity. This signature is not a summary of the source but a global state that coexists with locally retrieved evidence.

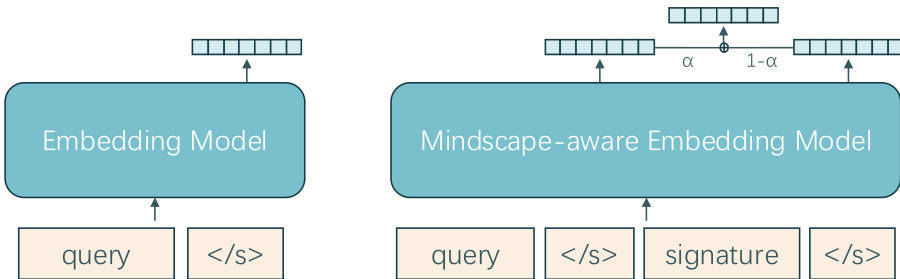

The framework operates through two primary retrieval mechanisms: a query-only retriever $E_1$ and a mindscape-aware retriever $E_2$. The query-only retriever, instantiated by SFT-Emb-8B, provides an initial view of relevant evidence by encoding only the query. In contrast, the mindscape-aware retriever, instantiated by MiA-Emb-8B, conditions its query representation on both the input query and a global memory signal, which is the current MiA-Signature $\sigma_t$. This allows the retrieval distribution to evolve as the signature is updated, enabling the system to track a changing view of the activated memory region. The retrieval score for a candidate evidence unit $c$ is a weighted combination of query relevance and consistency with the signature, $s(c \mid q, \sigma) = (1 - \alpha)\, s_{\mathrm{qry}}(c \mid q) + \alpha\, s_{\mathrm{sig}}(c \mid \sigma)$, where $\alpha$ controls the influence of the global signal.
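The weighted scoring rule above can be illustrated with a small sketch. The source does not say how the query term or the signature term is computed, so this example assumes cosine similarity over embedding vectors and takes the signature term as the maximum similarity between the candidate and any signature element. The function names and the default `alpha` are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def signature_aware_score(cand_vec, query_vec, signature_vecs, alpha=0.5):
    """Compute (1 - alpha) * s_qry + alpha * s_sig for one candidate.

    Assumptions (not from the source): s_qry is cosine similarity to the query,
    and s_sig is the best cosine similarity to any element of the signature.
    """
    s_qry = cosine(cand_vec, query_vec)
    s_sig = max((cosine(cand_vec, s) for s in signature_vecs), default=0.0)
    return (1.0 - alpha) * s_qry + alpha * s_sig


def rank_candidates(cand_vecs, query_vec, signature_vecs, alpha=0.5):
    """Return candidate indices sorted by descending signature-aware score."""
    scores = [signature_aware_score(c, query_vec, signature_vecs, alpha)
              for c in cand_vecs]
    return sorted(range(len(cand_vecs)), key=lambda i: scores[i], reverse=True)
```

Setting `alpha=0` recovers query-only ranking, while larger values pull the ranking toward candidates that agree with the current signature.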

The MiA-Signature is instantiated differently in static and dynamic settings. In the static RAG setting, the signature is constructed once and used as a fixed conditioning signal. The process begins with a step-0 retrieval using $E_1$ to obtain a broad set of candidate chunks. These are mapped to high-level memory units, forming a summary pool $H_0(q)$. The initial signature $\sigma_0$ is then selected via a coverage-aware submodular optimization that balances query relevance, coverage of the activated region, and diversity among the summaries. This selection is performed using a greedy approximation of the objective $F(\sigma; q, H_0(q))$.

In the dynamic agent setting, the signature is maintained as an evolving global state. It is initialized using the same submodular selection process but is refined iteratively within a loop. At each step $t$, the agent retrieves evidence using $E_2$ conditioned on the current query $q_t$ and signature $\sigma_t$. A state-update model $M_{\mathrm{upd}}$ then processes the retrieved evidence, the current state, and the high-level memory units to update the query, evidence memory, and the signature. The agent continues this process until a decision is made to answer or the refinement budget is exhausted. The final answer is generated by a generator model $M_{\mathrm{gen}}$ using the original query, the latest retrieved evidence, the final signature, and the accumulated evidence memory.
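The greedy selection step can be sketched under an assumed objective, since the exact form of F is not given here. The sketch below uses a common monotone submodular choice: summed query relevance plus a facility-location coverage term over the step-0 chunks, which discourages redundant summaries without a separate diversity penalty. The function name, the weight `lam`, and the input layout are hypothetical, and similarities are assumed to be non-negative.

```python
def greedy_signature(relevance, coverage_sim, k, lam=1.0):
    """Greedily pick up to k summaries for an initial signature.

    relevance[i]       : query relevance of summary i (assumed precomputed)
    coverage_sim[i][j] : non-negative similarity between summary i and chunk j
    Assumed objective  : sum of relevance over the selection
                         + lam * sum over chunks of the best similarity in the selection
    """
    n = len(relevance)
    n_chunks = len(coverage_sim[0]) if coverage_sim else 0
    selected = []
    best_cover = [0.0] * n_chunks  # best coverage of each chunk so far

    for _ in range(min(k, n)):
        best_gain, best_i = float("-inf"), None
        for i in range(n):
            if i in selected:
                continue
            # Marginal coverage gain: only improvements over current coverage count.
            cover_gain = sum(max(coverage_sim[i][j] - best_cover[j], 0.0)
                             for j in range(n_chunks))
            gain = relevance[i] + lam * cover_gain
            if gain > best_gain:
                best_gain, best_i = gain, i
        if best_i is None:
            break
        selected.append(best_i)
        best_cover = [max(best_cover[j], coverage_sim[best_i][j])
                      for j in range(n_chunks)]
    return selected


# Summaries 0 and 1 cover the same chunk; summary 2 covers a different one.
relevance = [0.9, 0.85, 0.3]
coverage_sim = [[1.0, 0.0], [0.9, 0.0], [0.0, 1.0]]
print(greedy_signature(relevance, coverage_sim, k=2))  # [0, 2]
```

In this toy input the second pick is the less relevant summary 2, because summary 1 adds almost no new coverage once summary 0 is selected. With `lam=0.0` the same call falls back to pure relevance ranking and returns `[0, 1]`.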

Experiment

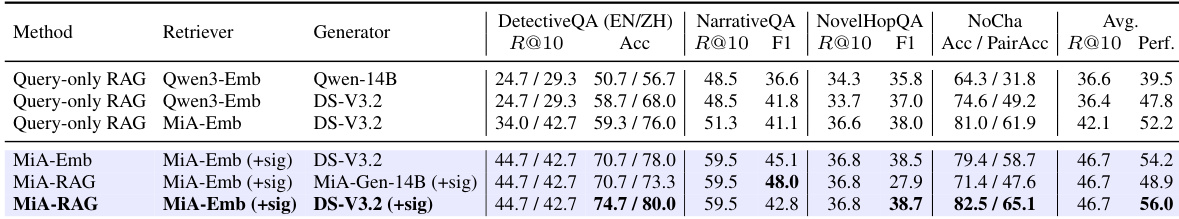

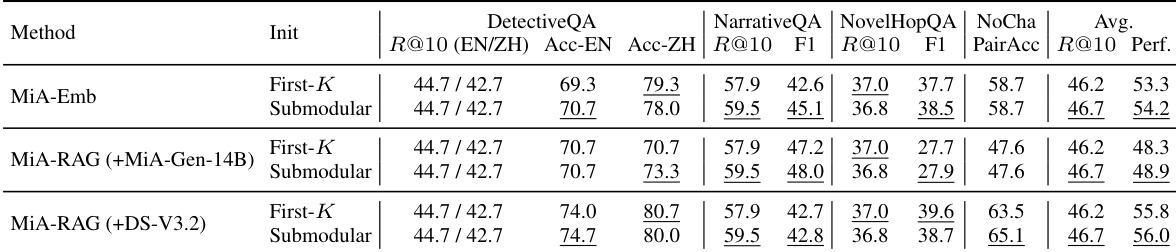

The evaluation tests MiA-Signatures across static RAG pipelines and iterative agent frameworks to assess their utility as compact global conditioning signals and evolving memory states. Experiments validate the interface against multiple baselines, initialization strategies, and query-rewriting controls, demonstrating that the signature effectively maintains global context alignment and preserves critical information bindings across successive retrieval steps. Qualitative analysis reveals that while coverage-aware initialization provides consistent gains for static pipelines, iterative agents leverage simpler initialization through online refinement, and query rewriting proves task-dependent rather than universally beneficial. Ultimately, the findings establish MiA-Signatures as a robust global-structure prior that successfully mitigates semantic interference in overcomplete memory spaces for long-context narrative understanding.

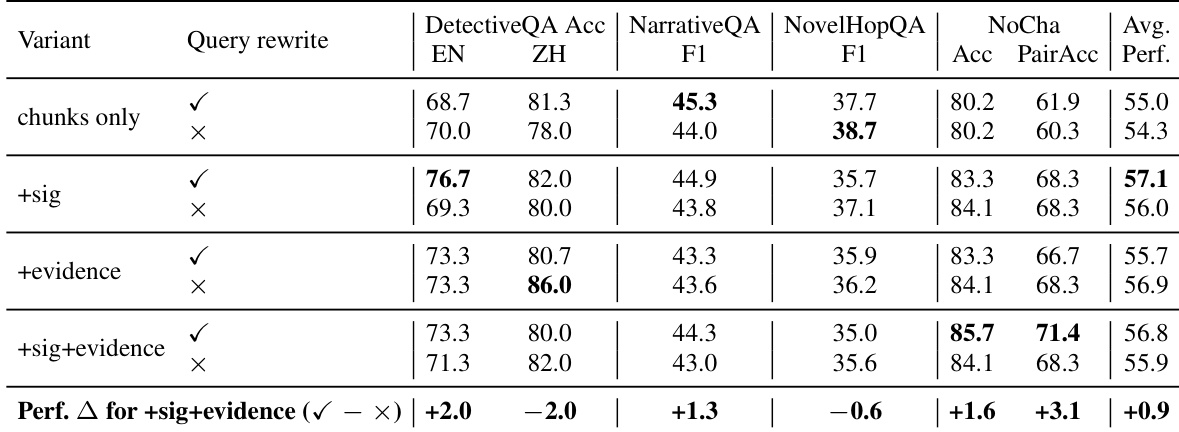

The authors ablate the components of the memory-augmented retrieval system, focusing on how query rewriting, the signature, and evidence memory affect performance across multiple benchmarks. Using the signature and evidence memory together yields the highest average performance and the most consistent improvement over baseline configurations. Query rewriting has mixed effects: it improves performance on some tasks but reduces it on others, indicating that its benefits are task-dependent.

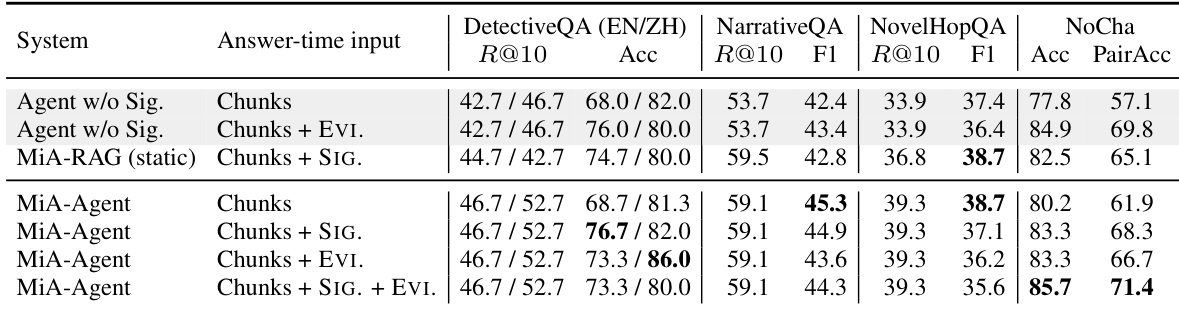

The authors evaluate MiA-Signatures in two long-context memory-access settings: a static RAG pipeline and an iterative agent. The signature improves performance in both settings over baselines without signature conditioning, with the most consistent gains when it is used during both retrieval and generation. In the agent setting, the evolving signature helps maintain a coherent global memory state across retrieval steps, particularly when the answer requires synthesizing evidence from multiple sources. Combining the signature with accumulated evidence memory yields the best performance, indicating that both components contribute to long-context understanding.

Across both settings, the full signature-aware interface achieves the highest performance on all benchmarks compared with query-only and retrieval-only variants, with the greatest improvements on tasks requiring synthesis across dispersed evidence. The evolving signature acts as a global memory state that aligns retrieval and generation in the iterative agent setting, particularly in complex narrative scenarios. The gains are significant on tasks with broad, redundant context, where maintaining global coherence is critical.

The signature-aware interface also improves performance across benchmarks when initialization and query rewriting are varied: the full signature-aware system outperforms baselines that omit the signature or use it only for retrieval. The signature improves the alignment between retrieval and generation, particularly on tasks requiring synthesis across dispersed evidence, though the size of the benefit depends on how much a task relies on global context. Initialization strategy and query rewriting themselves have varying impacts, indicating that the signature's role is complementary to these mechanisms.

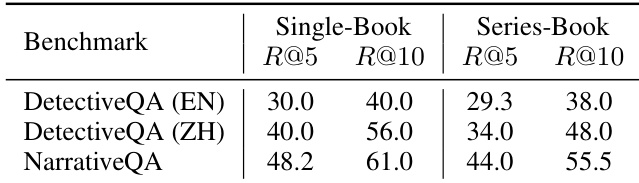

The authors compare retrieval performance under two indexing strategies: single-book indexing and series-book indexing, where books in a series are merged into a single document. Merging books reduces retrieval recall consistently across all benchmarks, indicating that cross-book context introduces semantic interference rather than useful information. The series-book setting is therefore a more challenging test of memory alignment and evidence selection, since it requires identifying the relevant regions in a larger, overcomplete memory space.

The experiments evaluate a memory-augmented retrieval system across static RAG and iterative agent settings, assessing how query rewriting, signature memory, and evidence memory interact alongside different indexing strategies. Findings demonstrate that combining signature and evidence memory consistently enhances performance by maintaining global coherence and aligning retrieval with generation, particularly for tasks requiring synthesis across dispersed context. While query rewriting yields mixed, task-dependent benefits, the full signature-aware interface remains the most robust configuration. Additionally, merging related texts into a single index degrades retrieval recall due to semantic interference, confirming that the series-book setup provides a more rigorous test of complex memory alignment.